TL;DR

- Model quality matters, but it does not create business value on its own. Value shows up when AI can securely operate within real systems such as Outlook, Teams, local runtimes, and enterprise applications and workflows.

- Agents need structure. MCP servers, plugins, skills, and integration layers are what turn a capable model into a usable desktop product.

- In projects like GePT-AI Studio, the hardest and most valuable work was not “making the AI smarter.” It was building the connective tissue: local bridges, setup flows, compiler detection, auth, deterministic actions, and safe integrations.

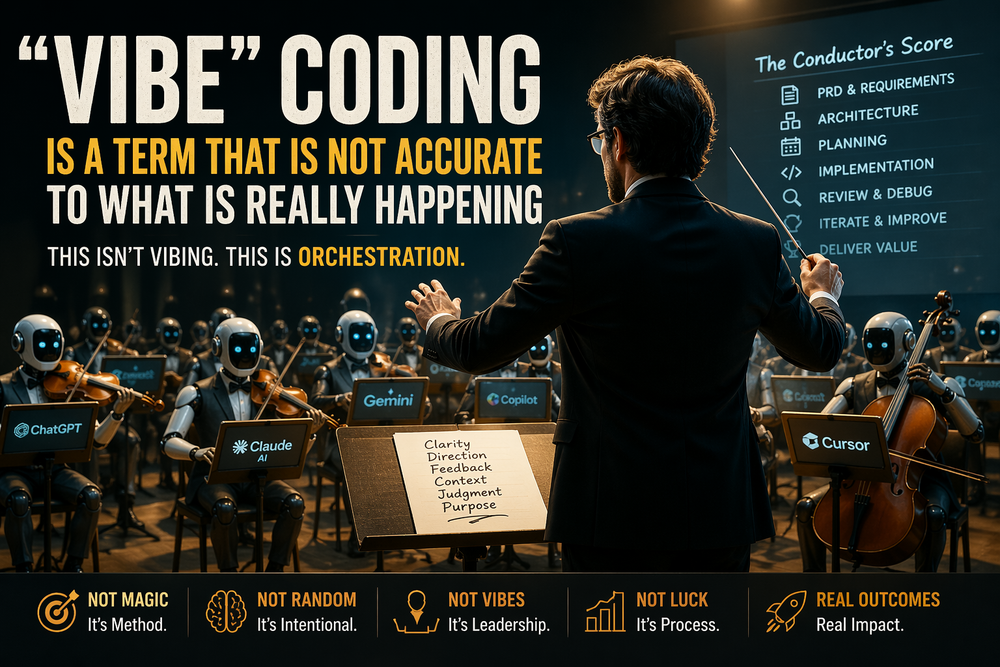

Across my recent vibe-coding projects, especially GePT-AI Studio, I kept running into the same lesson: the most important part of an AI desktop platform is usually not the model. It is the system around the model.

That may sound counterintuitive, since the market tends to rank models by benchmark scores, reasoning quality, latency, and tone. Those things do matter. A weak model makes everything worse. But once a model is good enough, the question that matters most is no longer “Which model is 3% smarter?” It is “What can this platform actually do inside a user’s real environment?”

That is where agents, MCP servers, plugins, skills, and integrations stop being implementation details and become the actual product.

A model is intelligence. A platform is usable intelligence.

A model can answer questions. A platform has to complete work.

That distinction is easy to miss in demos. A model-only demo looks impressive because it can summarize, brainstorm, and generate text on command. But business users do not live inside isolated prompts. They live inside Outlook, Teams, internal tools, local files, authentication boundaries, security controls, and machine-specific runtime environments.

So the real test is not whether the AI sounds smart. The real test is whether it can:

- find the right context,

- invoke the right tool,

- act in the right system,

- respect permissions and guardrails,

- and hand the user a result they can trust.

That lesson became obvious while building platform features around Teams integration, Outlook-style workflows, compiler detection, and integration setup. None of those pieces are as glamorous as model comparisons, but all of them matter more once you are building a product instead of a demo.

Agents are only as useful as their action surface

People talk about agents as if agency itself is the breakthrough. In practice, an agent without reliable tools is just a model with ambition.

An agent becomes valuable when it can translate language into action in a controlled way. That means it needs a bounded action surface, explicit contracts, predictable failure modes, and a clean path back to the user when confidence is low.

In GePT-AI Studio, that meant thinking less about “autonomy” as a buzzword and more about deterministic capabilities. If the agent needs to search Teams messages, that should be a defined action. If it needs to post to a chat, that should be a defined action. If it needs to inspect whether the local machine can run a compile or toolchain task, that should be a defined action too.
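To make “defined action” concrete, here is a minimal Python sketch, not GePT-AI Studio’s actual code. The `Action` and `ActionRegistry` names, the exact-argument contract, and the 0.7 confidence threshold are all hypothetical. The point is that unknown actions, malformed arguments, and low-confidence calls each fail in a predictable way that can be handed back to the user.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Action:
    """A defined action: a fixed name, the exact arguments it takes, and a handler."""
    name: str
    params: tuple
    handler: Callable[..., str]

class ActionRegistry:
    """Hypothetical bounded action surface: the agent can only call registered actions."""

    def __init__(self, min_confidence: float = 0.7):
        self._actions = {}
        self.min_confidence = min_confidence

    def register(self, action: Action) -> None:
        self._actions[action.name] = action

    def invoke(self, name: str, args: dict, confidence: float) -> dict:
        action = self._actions.get(name)
        if action is None:
            # Unknown actions fail predictably instead of being improvised.
            return {"ok": False, "error": f"unknown action '{name}'"}
        if set(args) != set(action.params):
            # The contract is exact: no missing and no extra arguments.
            return {"ok": False, "error": f"'{name}' expects arguments {list(action.params)}"}
        if confidence < self.min_confidence:
            # Low confidence goes back to the user rather than into a real system.
            return {"ok": False, "error": "low confidence; ask the user to confirm"}
        return {"ok": True, "result": action.handler(**args)}

# Usage: the handler is a stub standing in for a real Teams search.
registry = ActionRegistry()
registry.register(Action("search_teams_messages", ("query",), lambda query: f"results for {query}"))
print(registry.invoke("search_teams_messages", {"query": "release plan"}, confidence=0.9))
```

Returning structured errors instead of raising keeps every failure mode visible to the agent loop, which can then explain the problem to the user rather than crash or guess.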

This is where many AI products either become enterprise-ready or remain stuck as impressive prototypes. The difference is not whether the agent can “think.” The difference is whether the agent has a safe and useful interface to the world.

MCP servers are the contract layer, not just a protocol trend

This is why MCP matters.

The Model Context Protocol (MCP) defines a standardized way for LLM applications to connect to external tools and data, built around primitives such as tools, prompts, and resources. That matters because AI platforms need a clean contract between model-facing behavior and system-facing behavior.

In practical product terms, MCP servers are useful because they create separation of concerns.

The model does not need to know how Teams authentication works, how a local executable is discovered, or how a message gets resolved to the right channel. It needs a stable interface. The MCP layer, or a local bridge built around the same pattern, becomes the translation layer between “what the model wants to do” and “what the platform can safely do.”
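A rough sketch of that separation, in plain Python rather than an MCP SDK: the model only ever sees the tool descriptor, while the handler hides how the lookup actually happens. The field names (`name`, `description`, `inputSchema`, and a result made of `content` items plus `isError`) follow the MCP tool shapes as I understand them; the tool, the lookup table, and the handler are hypothetical, and a real server would sit behind an SDK and a transport rather than a bare function.

```python
import json

# Tool descriptor the model sees. Field names follow the MCP tool definition
# (name, description, inputSchema); the tool itself is hypothetical.
RESOLVE_CHANNEL_TOOL = {
    "name": "resolve_teams_channel",
    "description": "Find a Teams channel by team and channel display name.",
    "inputSchema": {
        "type": "object",
        "properties": {"team": {"type": "string"}, "channel": {"type": "string"}},
        "required": ["team", "channel"],
    },
}

# Hypothetical stand-in for the bridge's real work (auth, Graph lookups, caching).
_DIRECTORY = {("Platform", "Releases"): "channel-id-placeholder"}

def _text_result(text: str, is_error: bool) -> dict:
    # MCP-style tool result: a list of content items plus an isError flag.
    return {"content": [{"type": "text", "text": text}], "isError": is_error}

def handle_tools_call(params: dict) -> dict:
    """Handle the params of a tools/call request ('name' and 'arguments')."""
    if params.get("name") != RESOLVE_CHANNEL_TOOL["name"]:
        return _text_result(f"unknown tool: {params.get('name')}", True)
    args = params.get("arguments", {})
    missing = [k for k in RESOLVE_CHANNEL_TOOL["inputSchema"]["required"] if k not in args]
    if missing:
        return _text_result(f"missing arguments: {', '.join(missing)}", True)
    channel_id = _DIRECTORY.get((args["team"], args["channel"]))
    if channel_id is None:
        return _text_result("channel not found", True)
    return _text_result(json.dumps({"channelId": channel_id}), False)

print(handle_tools_call({"name": "resolve_teams_channel",
                         "arguments": {"team": "Platform", "channel": "Releases"}}))
```

Swapping `_DIRECTORY` for real authentication and Graph calls would not change anything the model sees, which is the whole point of the contract layer.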

That pattern was especially compelling in desktop-platform work because desktop environments are messy in ways cloud-only demos are not. Local apps are installed differently. Permissions vary. Runtime availability varies. Machines have different configurations. User context is local. Enterprise controls are real.

That is where MCP-style design stops being theoretical. It becomes the backbone for exposing tools in a consistent way without turning the entire product into a tangled mass of one-off integrations.

Plugins and skills are where architecture becomes product

I increasingly think of plugins and skills as product design, not just extension mechanisms.

A plugin is the integration container. It owns the connection to an external system, the setup model, the auth shape, the runtime, and the discoverability. A skill is the narrower capability surface the agent can invoke once that integration exists.

That distinction mattered in GePT-AI Studio. For example, the MS Teams integration was treated as a default local plugin, not just a loose skill. It had to do real work: search messages; resolve users, teams, and channels; create or reuse chats; post messages; and open results in the installed Teams client. Under the hood, that kind of integration is not just “call the model.” It is plugin packaging, runtime configuration, bridge logic, auth handling, and carefully scoped commands.
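One way to express the plugin/skill split in code, as a hypothetical Python sketch rather than the product’s real packaging: a `Plugin` owns setup state (reduced here to two booleans standing in for auth and runtime checks), and its `Skill` entries only become visible to the agent once the plugin is ready. The Teams skill names mirror the capabilities listed above; the `outlook` plugin and everything else are assumed for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class Skill:
    """Narrow capability surface the agent can invoke."""
    name: str
    description: str

@dataclass
class Plugin:
    """Integration container: owns setup state, auth, runtime, and its skills."""
    name: str
    skills: list = field(default_factory=list)
    authenticated: bool = False
    runtime_found: bool = False

    def is_ready(self) -> bool:
        return self.authenticated and self.runtime_found

def discoverable_skills(plugins: list) -> list:
    """Expose only skills whose plugin is fully set up, so the agent never
    plans around a capability that cannot run on this machine."""
    return [s.name for p in plugins if p.is_ready() for s in p.skills]

teams = Plugin(
    "ms-teams",
    skills=[
        Skill("search_messages", "Search Teams messages"),
        Skill("post_message", "Post to a chat or channel"),
        Skill("open_in_client", "Open a result in the installed Teams client"),
    ],
    authenticated=True,
    runtime_found=True,
)
outlook = Plugin("outlook", skills=[Skill("find_thread", "Find a mail thread")])  # not set up yet
print(discoverable_skills([teams, outlook]))
```

Hiding the skills of an unready plugin is also a simple form of graceful degradation: the agent sees a smaller but honest action surface instead of tools that will fail at call time.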

That is a much more honest view of what makes AI software usable.

Users do not care whether a feature was implemented as a plugin, skill, server, or tool registration. They care that the capability exists, is easy to install, behaves predictably, and fits into the workflow they already use.

So when people ask what makes an AI desktop platform strong, I do not start with the model leaderboard. I start with questions like:

- What integrations can the user activate?

- How hard is setup?

- How explicit are the actions?

- Can the agent operate safely across local and cloud boundaries?

- Can the platform degrade gracefully when something is missing?

Those are product questions disguised as infrastructure questions.

Teams and Outlook made the lesson obvious

The Teams work made this very concrete.

Microsoft Graph supports Teams messaging scenarios such as chats, channels, and chat messages, and Teams deep links support navigation directly into specific locations like chats, messages, or tabs.
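The raw calls themselves are small. The hedged Python sketch below only builds requests: it uses the Graph v1.0 endpoint for sending a chat message (`POST /chats/{chat-id}/messages` with a `body.content` payload) and the Teams deep-link format for starting a chat (`https://teams.microsoft.com/l/chat/0/0?users=...&topicName=...&message=...`). The helper names are hypothetical, and tokens, permissions, user approval, and actually sending are deliberately left out.

```python
import json
from urllib.parse import quote, urlencode

GRAPH = "https://graph.microsoft.com/v1.0"

def chat_message_request(chat_id: str, text: str) -> tuple:
    """Method, URL, and JSON body for sending a chat message via Microsoft Graph.

    Nothing is sent here; auth, retries, and user approval are left to the caller.
    """
    url = f"{GRAPH}/chats/{chat_id}/messages"
    body = json.dumps({"body": {"content": text}})
    return "POST", url, body

def chat_deep_link(users: list, topic: str, message: str) -> str:
    """Deep link that opens a Teams chat with the given users and a prefilled message."""
    query = urlencode(
        {"users": ",".join(users), "topicName": topic, "message": message},
        safe="@,",        # keep addresses and the user separator readable
        quote_via=quote,  # encode spaces as %20 rather than '+'
    )
    return f"https://teams.microsoft.com/l/chat/0/0?{query}"

print(chat_message_request("<chat-id>", "Draft: release notes are ready for review."))
print(chat_deep_link(["joe@contoso.com", "bob@contoso.com"], "Release prep", "Hi folks"))
```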

What mattered in practice, though, was not just that those APIs exist. What mattered was the end-to-end experience:

Can the AI find the right discussion?

Can it identify the correct people or channel?

Can it safely prepare or post the message?

Can it open the result in the desktop client so the user stays in their normal workflow?

That is business value. Not “the model produced polished prose.” The value is that a user can move faster inside a system they already depend on.

The same lesson applies to Outlook-style integration work. Microsoft Graph exposes mail and calendar data as first-class surfaces. But the real product question is not whether a model can draft an email. Any decent model can draft an email.

The real question is whether the platform can help the user in context:

- find the right thread,

- pull the relevant history,

- understand the calendar implications,

- draft something useful,

- and do it inside a governed workflow.

That is a completely different bar than pure generation quality.
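For the Outlook side, here is a sketch of the read-only context a platform might gather before drafting anything. `$search` on messages, `$filter` on `conversationId`, and `calendarView` with `startDateTime`/`endDateTime` are documented Graph query patterns; the helper names, the seven-day window, and building all three requests up front are my assumptions. Tokens, paging, and the `Mail.Read` and `Calendars.Read` permissions are left out.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import quote, urlencode

GRAPH = "https://graph.microsoft.com/v1.0"

def graph_url(path: str, params: dict) -> str:
    # safe="$" keeps OData names such as $search readable; quote_via=quote encodes spaces as %20.
    return f"{GRAPH}{path}?{urlencode(params, safe='$', quote_via=quote)}"

def outlook_context_requests(topic: str, conversation_id: str, now: datetime, days_ahead: int = 7) -> list:
    """Read-only Graph GET URLs for one mail task. Assumes topic has no double quotes."""
    start = now.astimezone(timezone.utc)
    end = start + timedelta(days=days_ahead)
    fmt = "%Y-%m-%dT%H:%M:%SZ"
    return [
        # 1. Find the right thread by keyword.
        graph_url("/me/messages", {"$search": f'"{topic}"'}),
        # 2. Pull the thread's history once its conversationId is known.
        graph_url("/me/messages", {"$filter": f"conversationId eq '{conversation_id}'"}),
        # 3. Check the calendar window the reply might affect.
        graph_url("/me/calendarView", {"startDateTime": start.strftime(fmt),
                                       "endDateTime": end.strftime(fmt)}),
    ]

for url in outlook_context_requests("budget review", "AAQk-example", datetime.now(timezone.utc)):
    print("GET", url)
```

Only after those reads succeed would a draft step run, and any send would go through the same kind of governed, explicit action as the Teams example.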

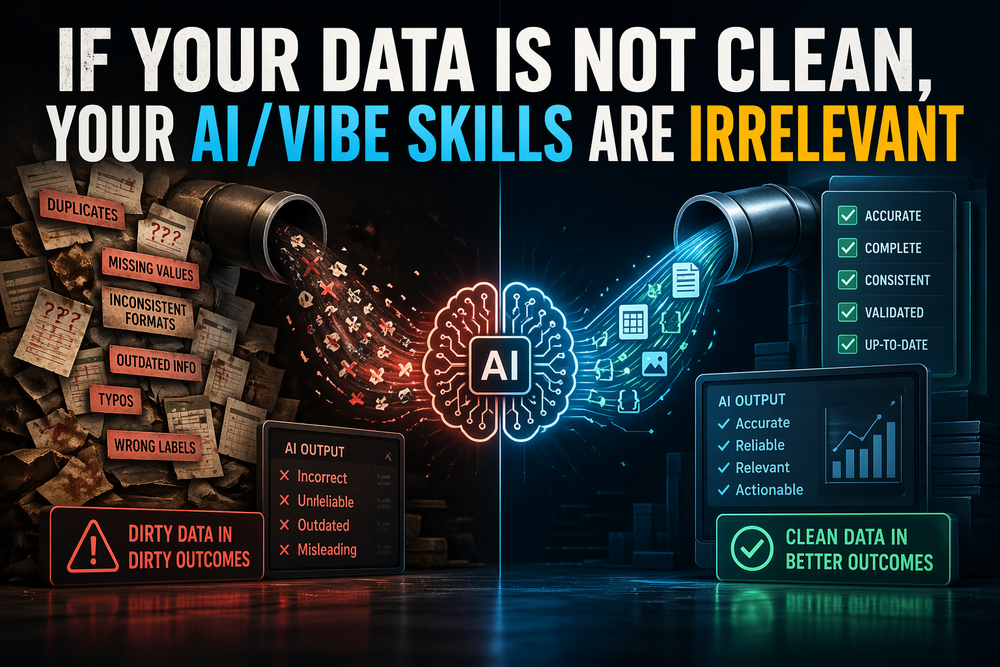

Compiler detection and integration setup are not side quests

Some of the highest-value work in AI desktop products looks boring from the outside.

Compiler detection is a good example. It sounds like plumbing. In reality, it is the difference between an AI coding flow that feels magical and one that collapses immediately in the real world.

If the platform cannot tell whether the machine has the right runtime installed, which executable is available, or how to launch the correct toolchain, then the model’s reasoning quality barely matters. The workflow breaks before intelligence becomes useful.
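A minimal version of that detection step might look like the Python sketch below. The candidate executables per language are hypothetical examples, and PATH lookup via `shutil.which` is only a first pass; a real platform would also check configured paths and known SDK install locations. The `which` parameter is injectable so the logic can be tested without touching the machine.

```python
import shutil
import subprocess

# Hypothetical candidates per language; a real platform would also check
# configured paths and known SDK install locations, not just PATH.
CANDIDATES = {
    "c": ["gcc", "clang", "cc"],
    "python": ["python3", "python"],
    "node": ["node"],
}

def detect_toolchain(language: str, which=shutil.which) -> dict:
    """Return the first candidate executable found on PATH, or an actionable miss."""
    candidates = CANDIDATES.get(language, [])
    for exe in candidates:
        path = which(exe)
        if path:
            return {"language": language, "found": True, "executable": exe, "path": path}
    return {"language": language, "found": False,
            "hint": f"Install one of {candidates} or add it to PATH."}

def tool_version(path: str) -> str:
    """First line of `<tool> --version`; not every toolchain supports this flag."""
    proc = subprocess.run([path, "--version"], capture_output=True, text=True, timeout=10)
    text = (proc.stdout or proc.stderr).strip()
    return text.splitlines()[0] if text else ""

# Usage: probe before offering a compile action to the agent.
info = detect_toolchain("c")
if info["found"]:
    print(info["executable"], "->", tool_version(info["path"]))
else:
    print(info["hint"])
```

The important design choice is that a miss returns a hint the user can act on, so the workflow stops with a clear next step instead of a confusing failure halfway through a task.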

The same is true for integration setup. If connecting a plugin is fragile, authentication is confusing, permissions are unclear, or local bridging is unreliable, the user does not experience “advanced AI.” They experience operational friction.
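One pattern that reduces that friction is a setup preflight: run every check before the first task and turn each failure into an instruction. The Python sketch below is hypothetical; the check names and fixes are illustrative, the lambdas stand in for real probes, and `Chat.ReadWrite` is used only as an example of a Graph permission a Teams plugin might need.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class SetupCheck:
    name: str
    passed: Callable[[], bool]  # probe that returns True when this step is satisfied
    fix: str                    # what to tell the user when it is not

def preflight(checks: list) -> list:
    """Run every check and return user-facing fixes for the failures, so setup
    problems show up as instructions before a task starts, not as errors mid-task."""
    return [f"{c.name}: {c.fix}" for c in checks if not c.passed()]

# Usage with hypothetical checks; the lambdas stand in for real probes.
checks = [
    SetupCheck("Sign-in", lambda: True, "Sign in with your work account."),
    SetupCheck("Permissions", lambda: False, "Ask an admin to grant the Chat.ReadWrite permission."),
    SetupCheck("Local bridge", lambda: True, "Restart the bridge from Settings."),
]
for problem in preflight(checks):
    print(problem)
```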

This is one of the biggest misconceptions in the current AI market: people overestimate the value of raw model intelligence and underestimate the value of workflow certainty.

A slightly better model in a weak integration shell usually loses to a slightly worse model in a platform that is connected, deterministic, and usable.

The business equation is multiplicative, not additive

I think about AI desktop value as a simple multiplicative equation:

Business value = model quality × integration quality × workflow fit × trust

If any one of those terms is near zero, the whole system underperforms.
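A quick worked example with made-up scores on a 0 to 1 scale shows why. Suppose platform A has a stronger model (0.95) but weak integrations (0.1), platform B has a somewhat weaker model (0.85) with solid integrations (0.8), and both score 0.9 on workflow fit and trust:

$$
\begin{aligned}
V_A &= 0.95 \times 0.1 \times 0.9 \times 0.9 \approx 0.077 \\
V_B &= 0.85 \times 0.8 \times 0.9 \times 0.9 \approx 0.551
\end{aligned}
$$

B comes out roughly seven times ahead. Averaging the same four scores instead would rate A at about 0.71 and B at about 0.86, which hides how badly one weak term drags the whole system down.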

That is why “model quality matters, but usable integrations determine business value” is not just a slogan. It is a product rule.

A strong model helps with interpretation, summarization, ranking, extraction, planning, and language generation. But integrations are what turn those capabilities into actions users would actually pay for. Plugins and skills are what make those actions discoverable. MCP servers and local bridges are what make those actions structured and governable. Setup, compiler detection, and environment handling are what keep the system from failing at the exact moment the user needs it.

In other words: the model is the brain, but the integration layer is the nervous system.

And a brain without a nervous system does not ship product value.

Final thought

The future winners in AI desktop platforms will not be the teams that only chase the smartest model. They will be the teams that build the best action architecture around capable models.

That means agents with boundaries.

MCP servers with clean contracts.

Plugins with real setup and discoverability.

Skills with deterministic scope.

Integrations that meet users inside Outlook, Teams, local toolchains, and enterprise systems.

And all the unglamorous bridge work required to make those layers reliable.

That is the real backbone of an AI desktop platform.

And in my experience, that is where the product becomes real.