TL;DR

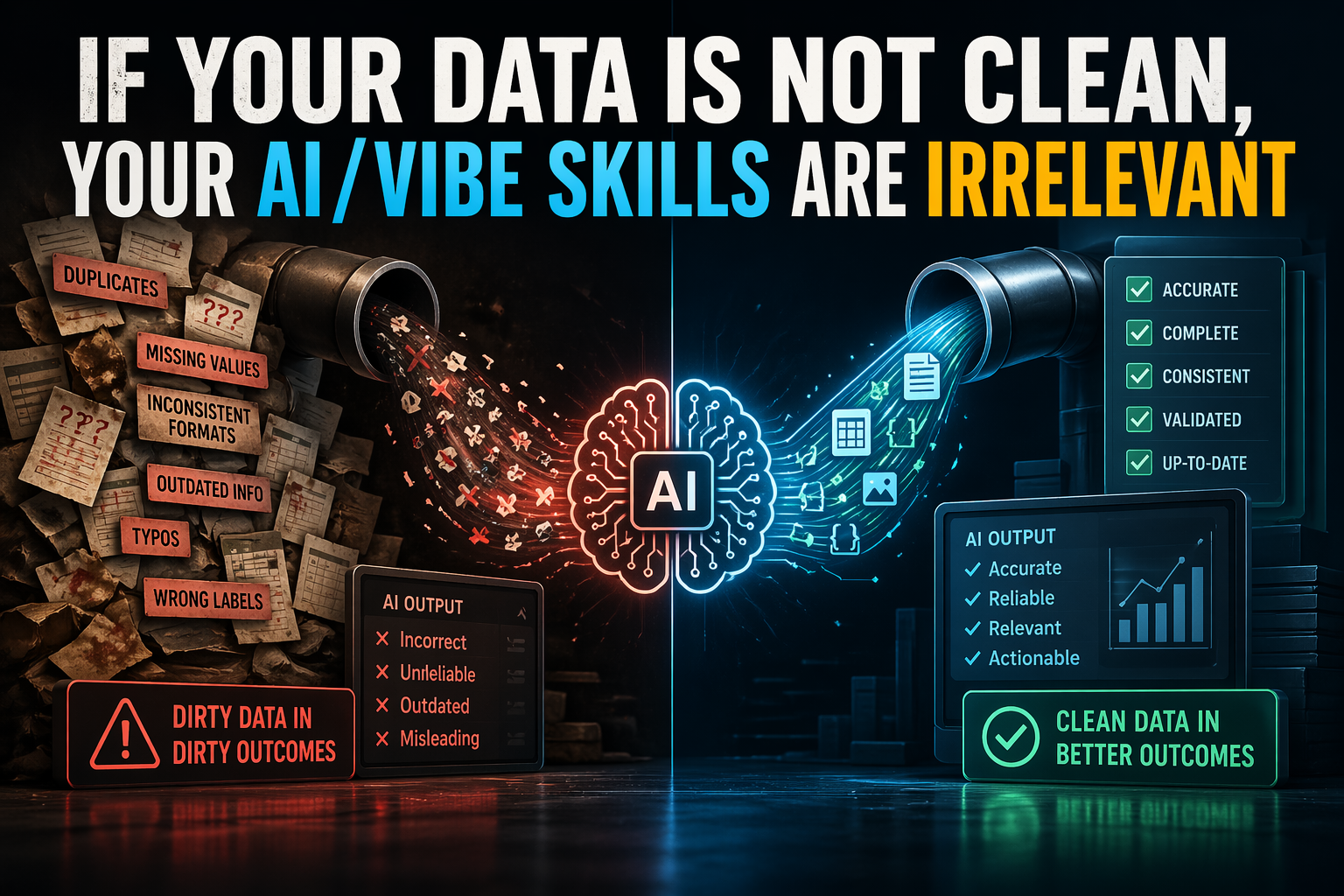

You cannot out-prompt bad data. If your ingestion pipeline pulls in duplicates, missing fields, broken labels, stale records, or inconsistent formats, your AI systems will produce confident but unreliable outputs. Prompting skill, model selection, and “vibe” cannot compensate for corrupted inputs; clean data is the real multiplier.

Everyone wants to talk about AI skill now.

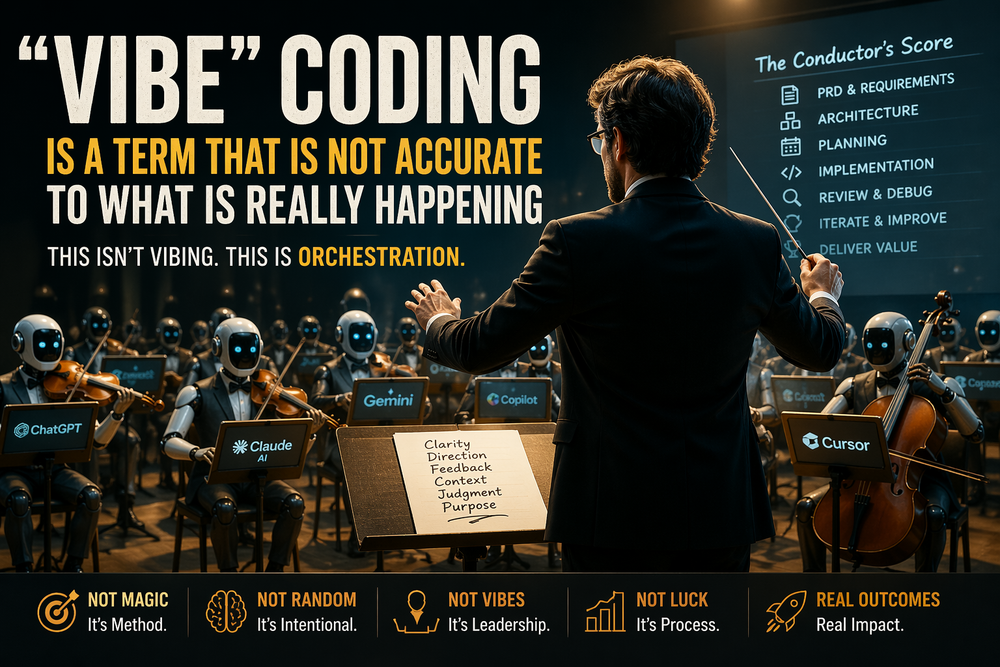

Prompting. Agent orchestration. RAG design. Workflow automation. “Vibe coding.” Tool use. Model switching. Stack selection.

But in my AI organizational transformation work, I keep coming back to one conclusion.

All of the above is fine. Those things matter. But there is a brutal truth beneath it all: if your data is dirty, your AI skills are mostly theater.

You can be excellent at prompting. You can know every trick for context windows, few-shot examples, retrieval tuning, and structured outputs. You can build impressive demos that feel smooth and intelligent. None of that changes the underlying constraint. If the system is ingesting garbage, it will emit garbage with better grammar.

This is not a model problem. It is a data reality problem.

The phrase “garbage in, garbage out” is old, but AI has made it feel new again because the outputs are now more persuasive. Traditional software usually fails loudly when the input is broken. AI often fails beautifully. It gives you an answer that sounds plausible, reads well, and appears thoughtful. That is exactly what makes dirty data so dangerous in AI systems: the failure mode is confidence, not collapse.

Consider a simple example. A company builds an internal AI assistant over its support knowledge base and ticket history. On paper, this looks like a smart use case. But the ingestion layer is messy. Articles are duplicated across multiple folders. Old procedures were never archived. Product names changed three times. Ticket tags are inconsistent. Dates are malformed. PDFs were OCR’d badly. Some records are missing key fields. A few high-volume ticket categories were mislabeled for months.

Now the team asks the assistant to summarize common issues and recommend next actions. The model does exactly what it is supposed to do: it synthesizes patterns from the material it was given. The problem is that the material is unreliable. So the assistant surfaces duplicate issues as if they are widespread, recommends outdated workflows, misses the current product taxonomy, and cites records that should never have been in scope.

Then someone says the prompts need work.

No. The data needs work.
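To make the duplication problem concrete, here is a minimal sketch of how copies of one article can inflate an issue count, and how a content hash removes them. The field names (`path`, `topic`, `body`) and the sample articles are hypothetical, and matching on whitespace- and case-normalized text is an assumption; real knowledge bases usually need fuzzier matching.

```python
import hashlib
import re
from collections import Counter


def content_key(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def deduplicate(articles: list[dict]) -> list[dict]:
    """Keep the first article seen for each distinct normalized body."""
    seen: set[str] = set()
    unique = []
    for article in articles:
        key = content_key(article["body"])
        if key not in seen:
            seen.add(key)
            unique.append(article)
    return unique


# Hypothetical sample: the same article saved in three folders.
articles = [
    {"path": "kb/billing/reset.md", "topic": "password reset",
     "body": "Go to Settings, then click Reset."},
    {"path": "kb/archive/reset.md", "topic": "password reset",
     "body": "Go to  Settings, then click reset.\n"},
    {"path": "kb/legacy/reset-copy.md", "topic": "password reset",
     "body": "go to settings, then click reset."},
    {"path": "kb/billing/refund.md", "topic": "refund",
     "body": "Open a refund request in the portal."},
]

raw_counts = Counter(a["topic"] for a in articles)
clean_counts = Counter(a["topic"] for a in deduplicate(articles))
print("before:", dict(raw_counts))
print("after: ", dict(clean_counts))
```

Before deduplication, "password reset" looks three times as common as "refund". After it, the two topics are tied. No prompt change produces that correction.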

Dirty ingestion contaminates the entire downstream system. It affects search, retrieval, ranking, embeddings, analytics, and generation. If the source documents are inconsistent, your vector index becomes inconsistent. If the metadata is broken, your filters are broken. If your labels are wrong, your fine-tuning signal is wrong. If your timestamps are unreliable, the system cannot distinguish current truth from historical noise. If customer records are duplicated, your personalization layer becomes a hallucination engine with a CRM attached.
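The timestamp point can be sketched in a few lines. This is an illustration under stated assumptions, not a recommended policy: the `updated_at` field, the one-year cutoff, and the choice to treat timezone-less values as UTC are all hypothetical. The idea is that a record with an unreadable date gets quarantined rather than silently treated as current.

```python
from datetime import datetime, timedelta, timezone


def parse_timestamp(value):
    """Return a timezone-aware datetime, or None if the value is unusable."""
    if not isinstance(value, str):
        return None
    try:
        parsed = datetime.fromisoformat(value)
    except ValueError:
        return None
    if parsed.tzinfo is None:
        # Assumption for this sketch: timestamps without a zone are UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed


def split_by_freshness(records, now, max_age):
    """Sort records into current, stale, and quarantined (bad or missing date)."""
    current, stale, quarantined = [], [], []
    for record in records:
        updated = parse_timestamp(record.get("updated_at"))
        if updated is None:
            quarantined.append(record)
        elif now - updated > max_age:
            stale.append(record)
        else:
            current.append(record)
    return current, stale, quarantined


# Hypothetical records; "now" is fixed so the example is reproducible.
records = [
    {"id": "A", "updated_at": "2024-05-20T10:00:00+00:00"},
    {"id": "B", "updated_at": "2021-03-02"},
    {"id": "C", "updated_at": "05/01/24"},  # malformed date
    {"id": "D"},                            # missing field
]
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
current, stale, quarantined = split_by_freshness(records, now, timedelta(days=365))
print([r["id"] for r in current], [r["id"] for r in stale], [r["id"] for r in quarantined])
```

Only the `current` list would be eligible for indexing in this sketch. The stale and quarantined lists become a work queue for whoever owns the source.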

This is why so many AI projects look impressive in a demo and disappointing in production. Demos are curated. Production is ingestion at scale.

And ingestion is where sloppiness compounds.

A single typo in a prompt may cause one bad answer. A broken ingestion pipeline can cause millions of bad tokens, bad embeddings, bad joins, bad retrieval results, and bad decisions. It pollutes every layer at once. Worse, those layers start reinforcing each other. Bad data creates bad summaries. Those summaries get stored. Those stored outputs later become new context. The system begins feeding on its own misunderstandings.

That is how organizations drift from “some messy inputs” to “institutionalized synthetic confusion.”

The uncomfortable part is that data cleaning is not glamorous. It does not feel like AI. Nobody posts screenshots of schema normalization with the same excitement they post autonomous-agent demos. Deduplication does not look innovative on a stage. Metadata hygiene is not sexy. But this is the work that separates serious systems from expensive improv.

If you care about reliable AI, then ingestion is not a back-office technical detail. It is the foundation. That means validating formats before storage. Enforcing schemas. Standardizing units. Resolving entity names. Removing duplicates. Tracking lineage. Monitoring drift. Flagging missing values. Versioning knowledge sources. Archiving stale content. Defining ownership for data quality instead of assuming “the AI team” will somehow absorb the problem later.

They will not. They cannot.
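A few of those practices (validating before storage, enforcing a schema, resolving entity names, flagging missing values) can be sketched as a small gate in front of the store. Everything named here is hypothetical: the ticket fields, the `PRODUCT_ALIASES` table, and the product names are invented for illustration, and a real pipeline would likely use a schema library rather than hand-rolled checks.

```python
from dataclasses import dataclass, field

# Hypothetical schema: required fields and their expected types.
REQUIRED_FIELDS = {"ticket_id": str, "product": str, "category": str, "body": str}

# Hypothetical alias table mapping old product names to the current one.
PRODUCT_ALIASES = {
    "acme sync": "Acme Sync",
    "acmesync": "Acme Sync",
    "sync pro": "Acme Sync",
}


@dataclass
class ValidationResult:
    record: dict
    errors: list[str] = field(default_factory=list)

    @property
    def ok(self) -> bool:
        return not self.errors


def validate_ticket(raw: dict) -> ValidationResult:
    """Check required fields and types, then normalize the product name."""
    record = dict(raw)  # never mutate the caller's record
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        value = record.get(name)
        if value is None or (isinstance(value, str) and not value.strip()):
            errors.append(f"missing field: {name}")
        elif not isinstance(value, expected_type):
            errors.append(f"wrong type for {name}: expected {expected_type.__name__}")
    product = record.get("product")
    if isinstance(product, str) and product.strip():
        canonical = PRODUCT_ALIASES.get(product.strip().lower())
        if canonical is None:
            errors.append(f"unknown product: {product}")
        else:
            record["product"] = canonical
    return ValidationResult(record, errors)


def ingest(raw_records):
    """Split a batch into accepted and rejected results; nothing is dropped silently."""
    accepted, rejected = [], []
    for raw in raw_records:
        result = validate_ticket(raw)
        (accepted if result.ok else rejected).append(result)
    return accepted, rejected


batch = [
    {"ticket_id": "T-1", "product": "AcmeSync", "category": "login", "body": "Cannot sign in."},
    {"ticket_id": "T-2", "product": "Sync Pro", "category": "", "body": "Export fails."},
    {"ticket_id": 3, "product": "Unknown Tool", "category": "billing", "body": "Charged twice."},
]
accepted, rejected = ingest(batch)
for result in rejected:
    print(result.record.get("ticket_id"), result.errors)
```

The design choice worth noting is that rejected records come back with reasons attached instead of being discarded. That list is what gives a data owner something specific to fix.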

AI systems are amplifiers. They amplify what is present in the data: signal, noise, bias, inconsistency, omissions, and contradictions. Clean data makes AI look smart because the model has something coherent to reason over. Dirty data makes AI look magical for five minutes and incompetent for five months.

This also reframes what “AI skill” actually means.

Real AI maturity is not just knowing how to ask better questions. It is knowing whether the system has the right to answer at all. It is understanding when the retrieval set is contaminated. It is recognizing that output quality is often an ingestion problem wearing a model-shaped costume. It takes discipline to fix the pipe before polishing the prompt.

The best AI practitioners are rarely the ones with the cleverest prompts. They are the ones who respect the full chain: source quality, ingestion quality, transformation quality, retrieval quality, and only then generation quality. They know that prompt engineering without data engineering is cosmetics.

So yes, learn the tools. Learn prompting. Learn agents. Learn automation. Learn how to build with speed and intuition. Those are useful skills.

But do not confuse visible skill with foundational competence.

If your data is not clean, your AI and “vibe” skills are irrelevant because the system is reasoning over a broken version of reality. And once reality is broken at ingestion, every downstream output is just a more polished expression of the same defect.

Clean data is not a nice-to-have. It is the precondition.

Everything else is performance.