TL;DR

- AI is becoming genuinely useful in malware detection, triage, and reverse engineering, and projects like Microsoft’s Project Ire show how far autonomous analysis has come.

- But detection is not sanitization. Microsoft explicitly notes that false positives and false negatives can occur with any threat protection solution, so an AI verdict alone should never be treated as a clean bill of health.

- In enterprise environments, the safer model is quarantine first, inspect deeply, sanitize deterministically, and release only on an explicit allowed verdict. OWASP recommends layered controls for file uploads, including antivirus or sandboxing and CDR where applicable.

- My conclusion: AI should be part of the file-security pipeline, but not the final authority on trust. For that, enterprises still need hard controls like file-type verification, sandboxing, policy enforcement, and Deep CDR-style reconstruction. And that is where my integration with OPSWAT MetaDefender Core will pay off!

The more I’ve explored AI-driven file scanning, the more I’ve come back to a simple distinction that gets blurred far too often: “Can AI detect that a file looks suspicious?” versus “Can AI make that file safe enough to release into an enterprise environment?”

Those are not the same question.

From an enterprise protection standpoint, that difference matters. A lot. In the real world, security teams are not just dealing with obvious executables. They are dealing with resumes, invoices, PDFs, Office files, archives, partner uploads, vendor documentation, AI-generated attachments, and all the messy file types that flow through normal business operations every day. A file does not need to “look like malware” to be dangerous. It just needs to be trusted too early.

That has been one of the big takeaways in my own recent journey around OPSWAT, AI-based scanning, and Deep CDR-style workflows. I started out interested in whether AI could become the smarter scanner in the pipeline. I ended up focusing more on how enterprises should handle untrusted content before trust is ever granted.

Where AI really helps

To be clear, I do think AI has real value here.

Microsoft Research’s Project Ire is a strong example of the upside: an autonomous AI agent designed to analyze and classify software by using tools like decompilers and then determining whether the software is malicious or benign. That is not marketing fluff; it points to a real direction for the industry. AI can accelerate analysis, reduce manual triage, and help defenders understand suspicious files faster.

In practice, that means AI can be very good at things like:

- prioritizing suspicious files for deeper analysis

- spotting patterns that signatures alone might miss

- explaining why something appears risky

- helping analysts move faster when queues are full

And that matters because defenders are not the only ones using AI. Microsoft has also warned that threat actors are operationalizing AI across the attack lifecycle to improve speed, scale, and resilience. Enterprises should assume the file threat landscape is becoming more adaptive, not less.

Where AI falls short

But this is where the marketing narrative often outruns reality.

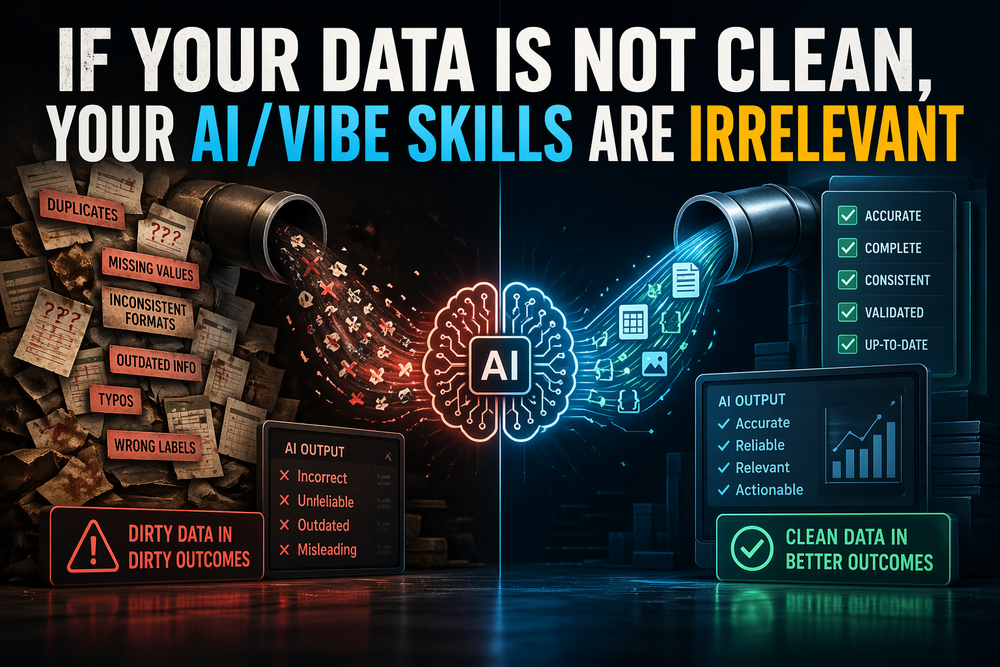

An AI model can assign risk. It can flag anomalies. It can even produce a convincing explanation of why a file may be malicious. What it cannot do, by itself, is turn a risky file into a trustworthy one simply because its confidence score looks good.

That is the core limit.

Microsoft’s own guidance is blunt on the broader point: false positives and false negatives can occur with any threat protection solution. That includes sophisticated, AI-assisted systems. So the enterprise mistake is not using AI; the mistake is treating AI as the final arbiter of trust.

There is also a deeper technical issue: detection and sanitization are different operations.

Detection asks, “Do I think this file is malicious?”

Sanitization asks, “Can I remove or neutralize unsafe elements and still deliver a usable file?”

Those are fundamentally different jobs. The first is probabilistic. The second needs to be much more deterministic.

That is why I’ve become increasingly skeptical of the idea that an enterprise should ever rely on an “AI-only sanitizer.” A model may be excellent at triage and still be the wrong control to place directly between an untrusted file and a production environment.
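
One way to picture that placement is a release rule where the model can veto a file but can never approve one by itself. This is a minimal sketch, not any vendor's API: the `Evidence` fields, the 0.0 to 1.0 score scale, and the `AI_BLOCK_THRESHOLD` value are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOWED = "allowed"
    BLOCKED = "blocked"


@dataclass(frozen=True)
class Evidence:
    ai_risk_score: float   # hypothetical scale: 0.0 looks benign, 1.0 looks malicious
    type_verified: bool    # content matched the declared file type
    scanners_clean: bool   # every malware engine reported clean
    sanitized: bool        # a deterministic rebuild step completed


AI_BLOCK_THRESHOLD = 0.7   # hypothetical cut-off, to be tuned per environment


def release_decision(ev: Evidence) -> Verdict:
    # The AI score can only veto: a high score blocks regardless of other results.
    if ev.ai_risk_score >= AI_BLOCK_THRESHOLD:
        return Verdict.BLOCKED
    # A low score never releases anything; every hard control must also pass.
    if ev.type_verified and ev.scanners_clean and ev.sanitized:
        return Verdict.ALLOWED
    # Anything else fails closed.
    return Verdict.BLOCKED
```

With this shape, a file the model scores at 0.01 is still blocked if sanitization did not complete, which is the asymmetry the probabilistic versus deterministic distinction calls for.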

The enterprise model I trust more

The model I trust more is the one I kept circling back to in my OPSWAT exploration: quarantine first, then process, then release only if policy says yes.

That means the file does not go straight to a user, an endpoint, a workflow engine, or an LLM-powered automation agent. It lands in a controlled zone first. From there, the pipeline can validate the true file type, inspect archives, run malware scanning, execute sandboxing where needed, apply sanitization or regeneration, and keep a full audit trail of what happened.
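
The flow described above can be sketched as an ordered list of stages that runs while the file sits in quarantine. This is only an illustration under stated assumptions: the `Stage` signature, the `Result` type, and the stage names are hypothetical, and real stages would call out to actual scanners, sandboxes, and sanitizers.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical stage contract: take the file bytes, return
# (passed, possibly rewritten bytes). A sanitizer would return new bytes.
Stage = Callable[[bytes], Tuple[bool, bytes]]


@dataclass
class Result:
    released: bool
    data: bytes
    audit: List[str] = field(default_factory=list)


def process_quarantined(data: bytes, stages: List[Tuple[str, Stage]]) -> Result:
    """Run every stage in order; any failure or error keeps the file quarantined."""
    audit: List[str] = []
    for name, stage in stages:
        try:
            passed, data = stage(data)
        except Exception as exc:
            # A crashing stage is treated as a failed stage (fail closed).
            audit.append(f"{name}: error ({exc!r})")
            return Result(False, b"", audit)
        audit.append(f"{name}: {'pass' if passed else 'fail'}")
        if not passed:
            return Result(False, b"", audit)
    # Only a file that cleared every stage leaves quarantine.
    return Result(True, data, audit)
```

The audit list records each stage outcome in order, so a blocked file always carries the reason it was held.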

OWASP’s File Upload Cheat Sheet aligns with that layered mindset. It recommends running uploaded files through antivirus or a sandbox where available, and running them through Content Disarm & Reconstruct for applicable types such as PDF and DOCX.

That is also why Deep CDR-style workflows make sense to me. OPSWAT describes Deep CDR as disarming and regenerating files, supporting embedded objects and recursive archive scanning, and sanitizing more than 200 file types. In other words, it is aimed at a different control point than pure detection: not just “spot the bad,” but “rebuild the file into something safer and usable.”
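
To make "recursive archive scanning" concrete, here is a small sketch using only Python's standard `zipfile` module. It is not how OPSWAT implements it; the limits `MAX_DEPTH` and `MAX_MEMBER_BYTES` and the `ArchiveLimitExceeded` exception are assumptions chosen for illustration, and only ZIP containers are handled.

```python
import io
import zipfile
from typing import Iterator, Tuple

MAX_DEPTH = 3                          # hypothetical nesting limit
MAX_MEMBER_BYTES = 50 * 1024 * 1024    # hypothetical per-member size limit


class ArchiveLimitExceeded(Exception):
    """Raised when an archive breaks a limit; callers should block the file."""


def walk_archive(data: bytes, path: str = "", depth: int = 0) -> Iterator[Tuple[str, bytes]]:
    """Yield (path, bytes) for every leaf file, descending into nested ZIPs."""
    if not zipfile.is_zipfile(io.BytesIO(data)):
        yield path or "<root>", data
        return
    if depth >= MAX_DEPTH:
        raise ArchiveLimitExceeded(f"nesting too deep at {path or '<root>'}")
    with zipfile.ZipFile(io.BytesIO(data)) as zf:
        for info in zf.infolist():
            if info.is_dir():
                continue
            # Check the declared size before decompressing the member.
            if info.file_size > MAX_MEMBER_BYTES:
                raise ArchiveLimitExceeded(f"member too large: {info.filename}")
            child_path = f"{path}/{info.filename}" if path else info.filename
            yield from walk_archive(zf.read(info), child_path, depth + 1)
```

Because DOCX and similar Office formats are themselves ZIP containers, this walker would descend into them as well. Each leaf it yields can then be handed to the scanning and sanitization steps, and hitting a limit is treated as a reason to block rather than to skip.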

The layered MetaDefender-style approach reinforces that logic by combining malware scanning and sandboxing with CDR rather than forcing one technique to do everything.

What changed in my thinking

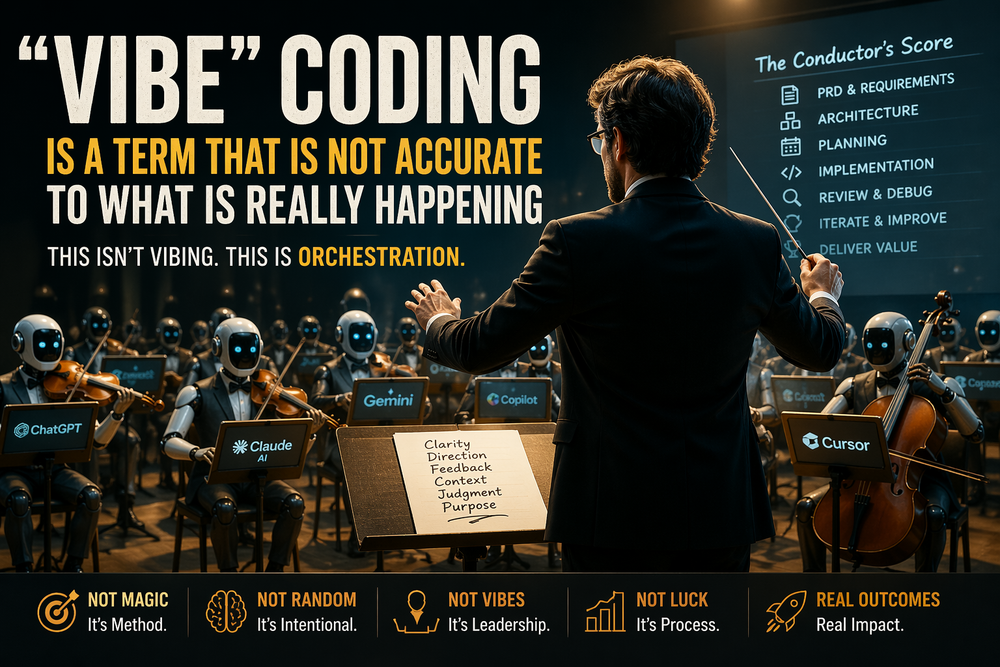

The biggest change in my thinking is that I no longer see AI-based scanning as the answer. I see it as an amplifier.

AI can improve file security. It can make analysis smarter. It can help identify edge cases, reduce analyst burden, and speed up decisions. But in an enterprise environment, the safest design still depends on controls that fail closed.

That means:

- do not trust file extensions alone

- do not let untrusted content flow straight to endpoints

- do not let an AI verdict be the sole release condition

- do use deterministic controls to strip, rebuild, isolate, and verify

That is where the real enterprise value is. Not in pretending AI has solved file trust, but in using AI intelligently inside a workflow that assumes untrusted content is hostile until proven otherwise.
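
As a small example of the first rule, the sketch below compares a file's extension against the leading bytes of its content. The signature table is deliberately tiny and non-exhaustive, and the function name is hypothetical. A matching header is a necessary check, not proof that the file is safe, since crafted files can carry a valid header and still be hostile.

```python
from pathlib import PurePath

# Well-known leading signatures for a few common types (not exhaustive).
MAGIC = {
    ".pdf": (b"%PDF-",),
    ".png": (b"\x89PNG\r\n\x1a\n",),
    ".zip": (b"PK\x03\x04",),
    ".docx": (b"PK\x03\x04",),   # OOXML documents are ZIP containers
    ".xlsx": (b"PK\x03\x04",),
}


def extension_matches_content(filename: str, head: bytes) -> bool:
    """Return True only if the first bytes match a signature for the extension."""
    ext = PurePath(filename).suffix.lower()
    signatures = MAGIC.get(ext)
    if signatures is None:
        # Unknown extension: fail closed instead of guessing.
        return False
    return any(head.startswith(sig) for sig in signatures)
```

A file named `invoice.pdf` whose content starts with `MZ` (the header used by Windows executables) fails this check and stays in quarantine.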

Final answer

So, can AI sanitize files safely?

Not on its own, and not in the way enterprises should define “safe.”

AI can absolutely strengthen malware scanning. It can improve triage, analysis, and even autonomous classification. But safe enterprise handling of untrusted files still requires a layered architecture: quarantine, verification, multi-layer inspection, deterministic sanitization, auditability, and a strict release policy.

That is the promise and the limit.

And honestly, that has become the clearest lesson in my own journey through AI-driven scanning and OPSWAT-style workflows:

Trust nothing you download. Verify everything first.