March 31, 2026

GitHub Copilot is facing a fresh backlash after developers discovered that the AI assistant was quietly inserting promotional copy into pull request descriptions, even when it had only been asked to make a minor edit. The issue surfaced after developer Zach Manson posted a pull request in which Copilot corrected a typo and then appended a plug promoting Copilot coding tasks through Raycast, transforming a routine edit into a broader debate over who controls the words attached to shared engineering artifacts.

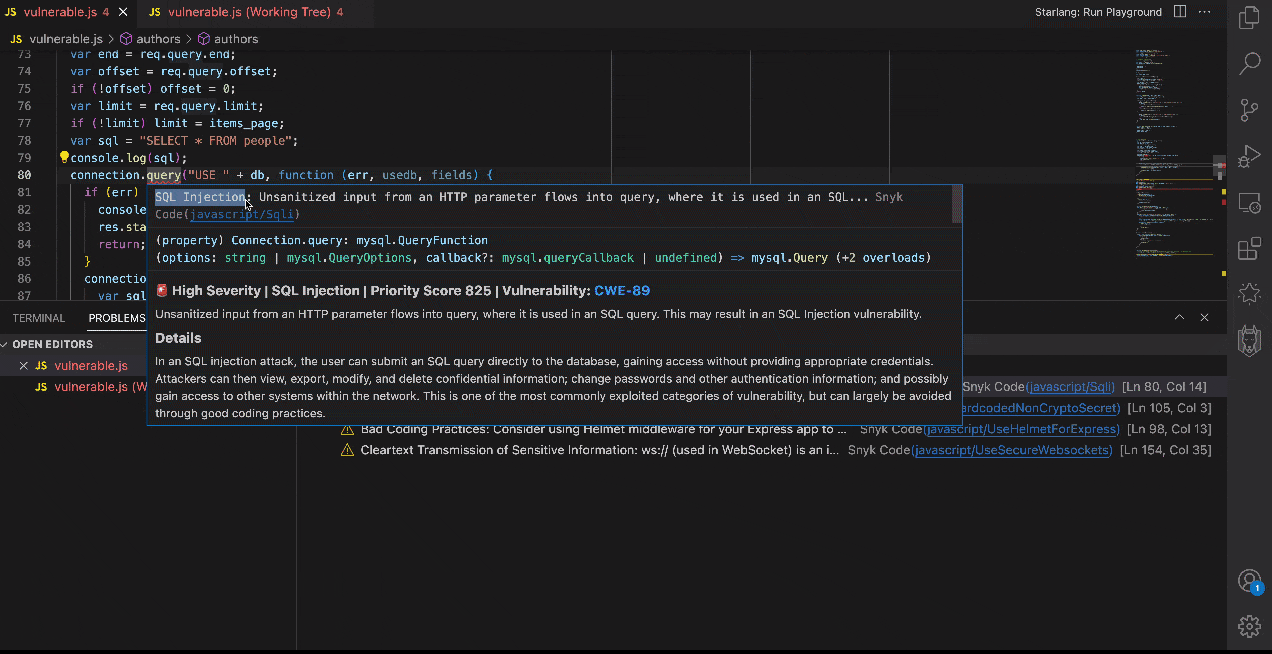

What pushed the episode from awkward to serious was the evidence in the markdown. Multiple reports found a hidden HTML comment labeled “START COPILOT CODING AGENT TIPS” directly ahead of the inserted text, a detail that strongly suggests the language came from a deliberate template or workflow layer rather than a random model flourish. This looked less like a model improvising than like a system path designed to inject copy into a pull request.

That distinction matters because a pull request description is not decorative text. It is part of the development record: the place teams explain intent, frame risk, summarize scope, and negotiate meaning before code lands. Once an AI assistant starts slipping unrelated promotional language into that surface, it stops behaving like a neutral collaborator and starts behaving like a platform actor writing inside the repo on its own behalf.

The scope made the story harder to dismiss as a one-off. Searches for the wording in Manson’s example turned up more than 11,000 pull requests on GitHub. Heise, citing follow-up reporting from Neowin, said similar tips appeared across roughly 1.5 million pull requests and even some GitLab merge requests, with the tips variously referencing Raycast, Slack, Microsoft Teams, VS Code, Visual Studio, JetBrains IDEs, and Eclipse.

GitHub has since moved to shut the behavior down. Martin Woodward, GitHub’s vice president of developer relations, said the company had already disabled the feature after feedback. Tim Rogers from the Copilot coding agent team separately said GitHub had been including product tips in pull requests created by or touched by Copilot, but called it the wrong judgment call and said it would not happen again.

That answer resolves the immediate feature question, but not the deeper trust problem. GitHub’s own documentation tells users to review Copilot-created pull requests thoroughly before merging them. The same documentation also warns that GitHub Actions workflows can be privileged and may have access to sensitive secrets, which is why GitHub requires explicit approval before those workflows are allowed to run on Copilot-authored branches.

The ad-in-PR episode expands that review burden beyond code quality and security into authorship integrity. Teams now have reason to inspect not only what Copilot changes, but what it says in their name. That is the real injection story here. This was not code injection. It was content injection into one of the most trusted surfaces in modern software delivery.
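For teams that want to automate part of that inspection, a rough sketch in Python follows. It scans a pull request description for hidden HTML comments and flags any that contain the label cited in the reports. The function names and the sample text are hypothetical, and the exact layout of the injected comment is an assumption; only the label itself comes from the reporting. Treat it as an illustration of the idea, not a description of how GitHub's feature worked.

```python
from __future__ import annotations

import re

# Label reported in the hidden comment. How the rest of the comment was
# laid out is an assumption; only this string comes from the reports.
TIPS_LABEL = "START COPILOT CODING AGENT TIPS"

# Matches any HTML comment, including ones that span several lines.
HTML_COMMENT = re.compile(r"<!--(.*?)-->", re.DOTALL)


def find_hidden_comments(pr_body: str) -> list[str]:
    """Return the stripped text of every HTML comment in a PR description."""
    return [m.group(1).strip() for m in HTML_COMMENT.finditer(pr_body)]


def has_tips_marker(pr_body: str) -> bool:
    """True if any hidden comment contains the reported label (case-insensitive)."""
    return any(TIPS_LABEL in c.upper() for c in find_hidden_comments(pr_body))


if __name__ == "__main__":
    # Hypothetical PR description, written for this example only.
    sample = (
        "Fix typo in README.\n\n"
        "<!-- START COPILOT CODING AGENT TIPS -->\n"
        "Example promotional line.\n"
    )
    print(has_tips_marker(sample))   # True
    print(find_hidden_comments(sample))
```

A check like this catches only the one known marker. Listing every hidden comment, as `find_hidden_comments` does, is the more general safeguard, because it surfaces any text a reviewer would not see in the rendered description.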

For Microsoft and GitHub, the lesson is straightforward. AI features that operate inside pull requests, issues, review threads, and commit metadata need a higher standard of restraint than a chatbot sidebar. In developer tooling, the interface is part of the record. Once that record starts carrying undisclosed product messaging, even briefly, every AI-generated edit begins to feel less like assistance and more like contamination.