TL;DR

UX has always been my weakest point in vibe coding. No matter how strong the instruction set, I’d spend hours tweaking code and still miss the mark. The shift was simple: stop coding UX first.

Prototype it with AI instead. Iterate visually, lock the design, then build. Faster, cleaner, and significantly more accurate.

There’s a moment in almost every project where things start to feel… off.

Not broken. Not failing. Just not right.

For me, that moment almost always shows up in the same place: the UI.

I can build systems. I can architect workflows. I can stand up agents, orchestrators, and instruction sets that actually behave the way they’re supposed to. But when it comes to UX? That’s where things have historically unraveled a bit.

And what makes it more frustrating is that it doesn’t fail loudly. It fails quietly.

The application works. The logic is sound. The features are there. But the experience feels clunky, misaligned, or just slightly disconnected from what I had in my head.

That gap—that translation failure between intent and interface—has been my consistent downfall.

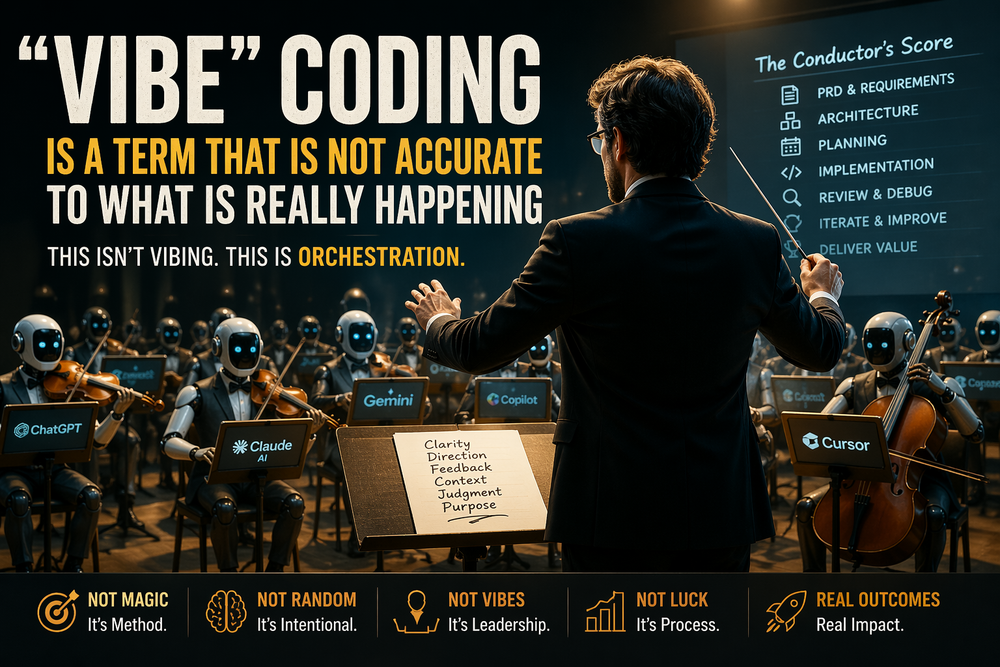

Vibe Coding Works… Until It Hits UX

Vibe coding, especially when you add structure, is incredibly powerful. A solid PRD, layered instruction sets, proper orchestration, and continuous review can take you surprisingly far. You can build real systems quickly, and more importantly, you can evolve them just as fast.

But UX doesn’t behave like backend logic.

You can describe a workflow in exact terms. You can define inputs, outputs, and edge cases. The model will generally get you close, and sometimes exactly right.

But try describing “clean,” “intuitive,” or “enterprise-grade UI,” and suddenly you’re in a different problem space entirely.

Those words are subjective. They’re contextual. And more importantly, they’re visual.

So what ends up happening?

You write more instructions. You get more specific. You try to control layout through text. You regenerate code. You tweak. You rebuild.

And somehow, you’re still not there.

The Cost of Fixing UX in Code

This is where I kept burning time.

Every iteration meant going through the same loop:

You adjust the instruction set.

You regenerate the UI code.

You reload the app.

You evaluate the result.

Repeat.

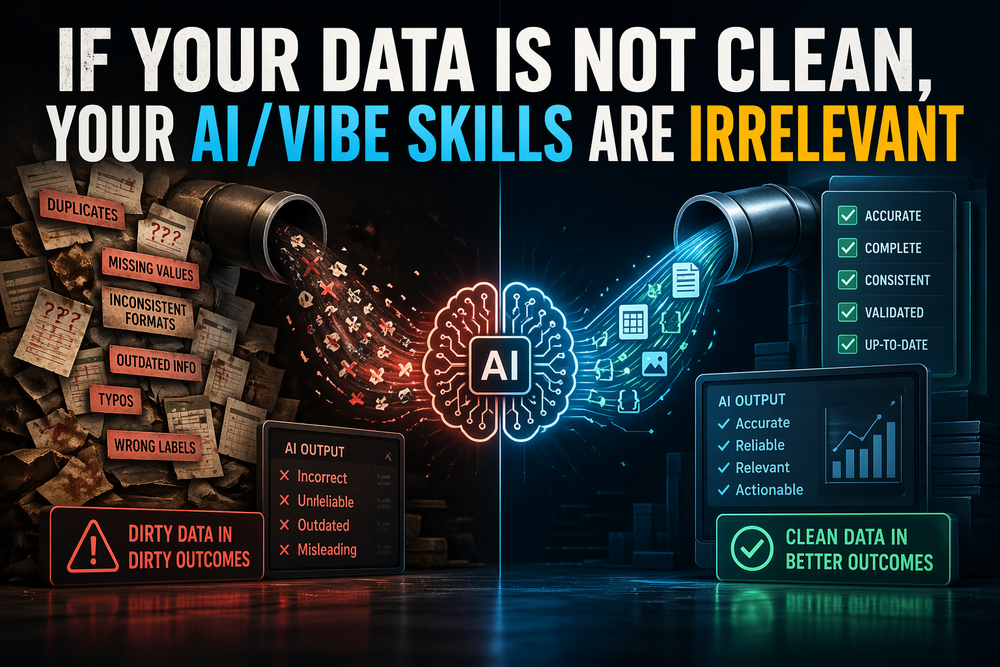

And the worst part? Even after all that, the result often still misses the intent. Not because the model failed, but because I was asking it to interpret something inherently visual through purely textual constraints.

From a systems perspective, it’s inefficient.

From a human perspective, it’s exhausting.

The Realization

At some point, it clicked.

I wasn’t actually solving the problem—I was fighting the medium.

I was trying to design visually… inside code.

That’s backwards.

Traditional teams don’t do this. They separate concerns. Design happens in tools like Figma. Development happens afterward. There’s a reason for that separation—it reduces ambiguity.

Vibe coding collapses that boundary, which is powerful, but that doesn’t mean the separation stops being useful.

So instead of continuing to brute-force UX through instruction sets, I changed the approach.

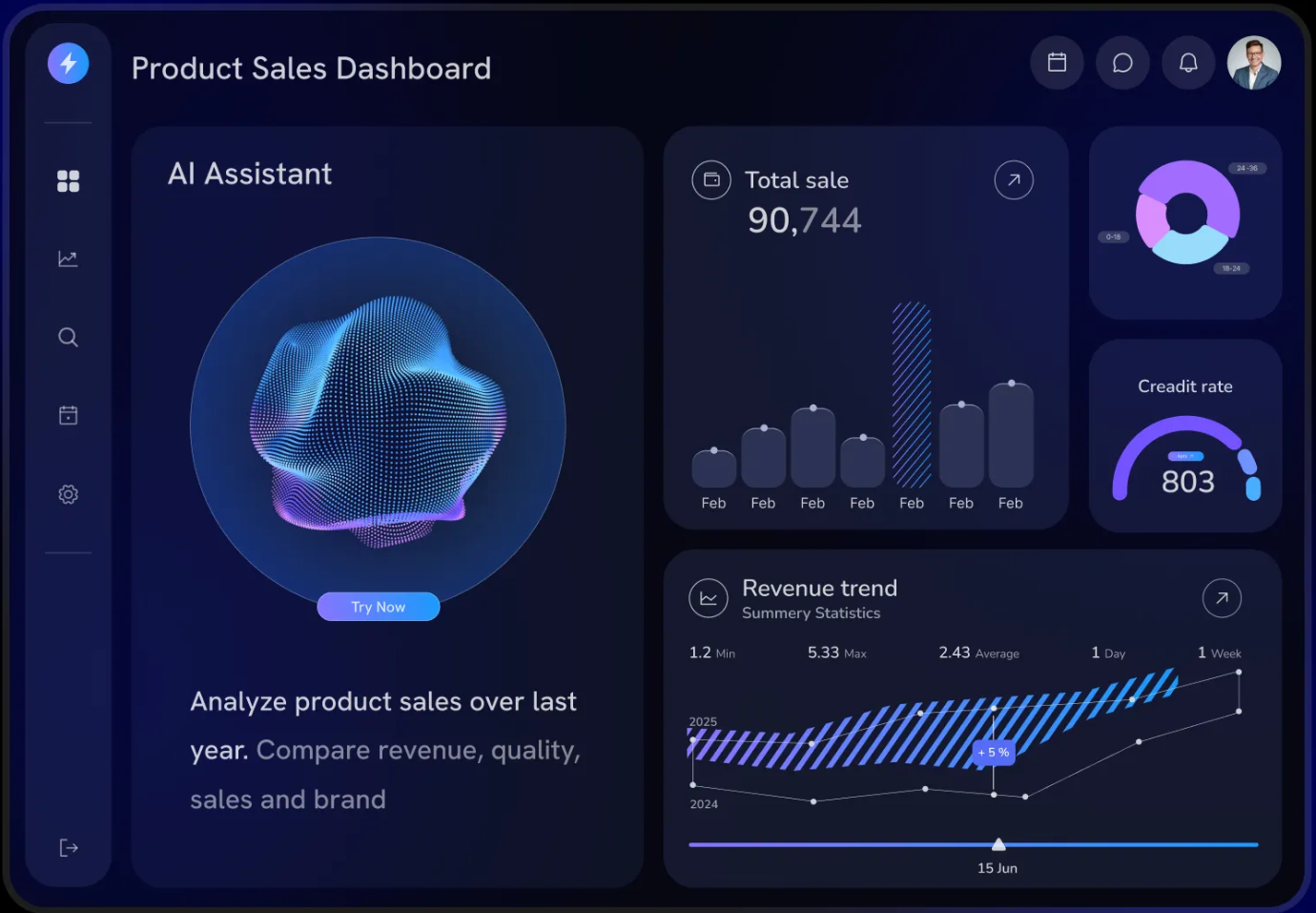

Enter ChatGPT as a UX Prototyping Tool

The shift was simple, but the impact was not.

Instead of telling the model to build the UI in code, I started by asking it to show me the UI.

No code. Just structure. Layout. Visual intent.

“Create a clean, enterprise-style interface with a left navigation tree, a central chat window, and a right-side task panel.”

Then I iterate.

“Move this.”

“Remove that.”

“Make this tighter.”

“Stretch this section.”

Suddenly, the feedback loop collapses from minutes to seconds.

I’m no longer regenerating and reloading an application just to evaluate spacing. I’m no longer guessing how the model interpreted my instructions. I can see it immediately, adjust it immediately, and converge quickly.

Why This Actually Works

What I’ve found from experience, and what the design-before-development split in traditional teams already suggests, is that this approach aligns much more closely with how humans process design.

We’re visual.

We can evaluate a layout in seconds in a way that would take paragraphs to describe. When you try to encode that evaluation into instructions first, you introduce friction, ambiguity, and drift.

By flipping the order—visual first, code second—you remove that ambiguity almost entirely.

It also compresses the instruction layer. Instead of writing hundreds of tokens describing layout behavior, I can simply point to a mockup and say:

“Build this.”

That’s a fundamentally different interaction model with AI.
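To make the “Build this.” hand-off a little more concrete, here is a minimal Python sketch of how a locked mockup plus a few short region notes could stand in for a long layout description. It is an illustration only, not tooling from my project: the `Region` class, the `build_prompt` function, and the `mockup-v3.png` filename are all hypothetical. The three regions come from the example prompt earlier in this post.

```python
from dataclasses import dataclass, field


@dataclass
class Region:
    """One area of the approved mockup (hypothetical structure)."""
    name: str
    position: str  # "left", "center", or "right"
    notes: list[str] = field(default_factory=list)


def build_prompt(mockup_file: str, regions: list[Region]) -> str:
    """Turn a locked mockup and a few region notes into a short build request."""
    lines = [f"Build the UI shown in {mockup_file}. Match the layout exactly."]
    for region in regions:
        detail = f"- {region.position}: {region.name}"
        if region.notes:
            detail += " (" + "; ".join(region.notes) + ")"
        lines.append(detail)
    return "\n".join(lines)


# Regions taken from the example prompt above; the filename is an assumption.
regions = [
    Region("navigation tree", "left"),
    Region("chat window", "center", ["takes the remaining width"]),
    Region("task panel", "right"),
]

prompt = build_prompt("mockup-v3.png", regions)
print(prompt)
```

The point of the sketch is the size of the request: the image carries the spacing, proportions, and visual tone, so the text only has to name the regions and flag anything the picture cannot show.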

Applying This to My Current Project

In my current build—“One Desktop Client To Rule Them All”—this became unavoidable.

After weeks of layering instruction sets and refining behavior, I realized I wasn’t stuck because of complexity in the system. I was stuck because the UI wasn’t aligning with the vision, and every attempt to fix it in code was compounding the problem.

I had a choice.

Keep iterating the same way and hope it converges.

Or reset the UX workflow entirely.

I reset it.

Now, every UI decision starts as a mockup. I iterate until it feels right. Only then do I move into implementation.
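If it helps to see that rule as a gate, here is a small Python sketch under my own assumptions. The `Mockup` class, its `Stage` values, and `ready_to_implement` are hypothetical names for illustration, not part of any real tool. The only idea it encodes is that feedback goes into the mockup while it is a draft, and implementation starts only once the design is locked.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    LOCKED = "locked"


class Mockup:
    """Tracks one UI mockup through visual iteration (hypothetical)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.stage = Stage.DRAFT
        self.revisions: list[str] = []

    def revise(self, feedback: str) -> None:
        # Visual feedback is only accepted while the design is still a draft.
        if self.stage is Stage.LOCKED:
            raise RuntimeError(f"{self.name} is locked; design changes are closed")
        self.revisions.append(feedback)

    def lock(self) -> None:
        # Locking marks the point where the design "feels right".
        self.stage = Stage.LOCKED


def ready_to_implement(mockup: Mockup) -> bool:
    """Code generation should start only from a locked mockup."""
    return mockup.stage is Stage.LOCKED


mockup = Mockup("main window")
mockup.revise("Move the task panel to the right.")
mockup.revise("Make the navigation tree tighter.")
blocked_before_lock = not ready_to_implement(mockup)

mockup.lock()
print(ready_to_implement(mockup))
```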

The Difference Is Immediate

Three things changed as soon as I switched.

The UI improved—not incrementally, but noticeably.

Iteration time dropped significantly.

Frustration around UX essentially disappeared.

And perhaps most importantly, I stopped trying to “out-instruct” the model.

Instead, I started collaborating with it visually.

Final Thought

Vibe coding didn’t fail me.

I just misunderstood where it needed structure and where it needed a different approach.

AI isn’t just a coding assistant. It’s a thinking tool. A design tool. A way to externalize ideas quickly and refine them without friction.

Once I stopped forcing UX through code and started treating it as something to prototype first, everything changed.

UX didn’t become my strength overnight.

But it stopped being my downfall.