TL;DR

Vibe coding without structure feels fast—but it quietly compounds architectural chaos, intent drift, and technical debt until fixing becomes slower than rebuilding. The solution is not abandoning vibe coding, but constraining it with discipline: start with a PRD, break work into phase-based instruction sets, use proper orchestration with defined agent roles, and enforce continuous code review.

At the same time, cost matters. Iterative agent-driven development on platforms like OpenAI and Anthropic can quickly become expensive due to repeated runs and large context usage. Running a local stack (LM Studio + Qwen3-Coder-Next) changes the cost model: roughly 10% slower in my use, but with comparable output quality and no per-token charges.

The takeaway: if your project is spiraling in complexity, restarting with structure and a controlled local environment is often faster—and more scalable—than continuing to patch a broken foundation.

There is a point in every AI-assisted project where you realize you are no longer building the product.

You are managing the consequences of previous prompts.

That is the dark side of vibe coding. At the beginning, it feels like a superpower. The model is fast, the code appears, the dopamine hits, and suddenly you are “shipping.” Then three days later you discover your app has four competing state models, two authentication ideas, a mystery utility folder, and a function named final_final_really_fixed_v2.

Vibe coding without structure creates a very specific kind of debt. It is not just technical debt. It is intent debt. The model only knows what you most recently emphasized, so every new instruction can quietly weaken an earlier architectural choice. One prompt optimizes speed. The next optimizes elegance. The next optimizes compatibility. The next adds a feature “real quick.” Before long, the codebase is not a system. It is a historical record of your mood.

That is why fixing can become slower than restarting. Once the architecture has drifted too far, every patch is working against unseen assumptions. You are not fixing one bug. You are litigating a thousand tiny prompt decisions.

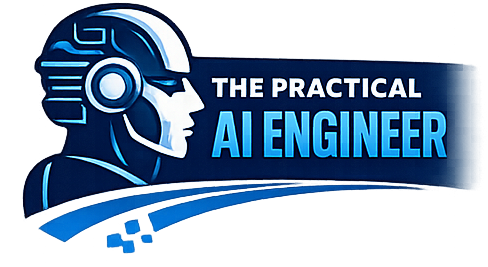

What actually works better for me is vibe coding with structure:

PRD → Phase Instruction Sets → Proper Orchestration and Agents → Constant Code Review

That sequence matters.

The PRD comes first because the model needs a source of truth bigger than the last message. A proper product requirements document forces scope, constraints, user flows, non-goals, and success criteria into one place. Then phase instruction sets turn the project into bounded chunks instead of one giant “build the whole thing” hallucination buffet. Orchestration comes next, because planning, implementation, validation, and refactoring are not the same job and should not all be shoved into one giant context window. Finally, constant code review is the guardrail that stops drift before it hardens into architecture. That review loop is where you catch nonsense early, normalize patterns, reject clever garbage, and keep the repo readable by future-you — who is usually less impressed than past-you.
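To make the sequence concrete, here is a minimal sketch of how a phase instruction set could be represented so that every prompt carries the PRD reference, the phase boundary, and the non-goals with it. Everything in it is an assumption for illustration: the role names, the `Phase` and `Plan` classes, the `docs/PRD.md` path, and the sample phase are hypothetical, not part of any tool.

```python
from dataclasses import dataclass, field

# Hypothetical role names for the four jobs named above.
ROLES = ("planner", "implementer", "validator", "refactorer")


@dataclass
class Phase:
    name: str
    goal: str
    in_scope: list[str]
    non_goals: list[str]
    done_when: list[str]  # success criteria the reviewer checks


@dataclass
class Plan:
    prd_path: str  # the PRD stays the single source of truth
    phases: list[Phase] = field(default_factory=list)

    def prompt_for(self, phase_index: int, role: str) -> str:
        """Build a bounded instruction set for one role in one phase."""
        if role not in ROLES:
            raise ValueError(f"unknown role: {role}")
        phase = self.phases[phase_index]
        lines = [
            f"Role: {role}",
            f"Source of truth: {self.prd_path}",
            f"Phase {phase_index + 1} of {len(self.phases)}: {phase.name}",
            f"Goal: {phase.goal}",
            "In scope:",
            *[f"- {item}" for item in phase.in_scope],
            "Non-goals (do not touch):",
            *[f"- {item}" for item in phase.non_goals],
            "Done when:",
            *[f"- {item}" for item in phase.done_when],
        ]
        return "\n".join(lines)


# Hypothetical example phase, purely for illustration.
plan = Plan(
    prd_path="docs/PRD.md",
    phases=[
        Phase(
            name="Auth",
            goal="Single sign-in flow",
            in_scope=["login screen", "session storage"],
            non_goals=["billing", "second auth provider"],
            done_when=["login tests pass", "one state model for sessions"],
        )
    ],
)
print(plan.prompt_for(0, "implementer"))
```

The point of the structure is that the non-goals and success criteria travel with every prompt, so the last message never becomes the only source of truth.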

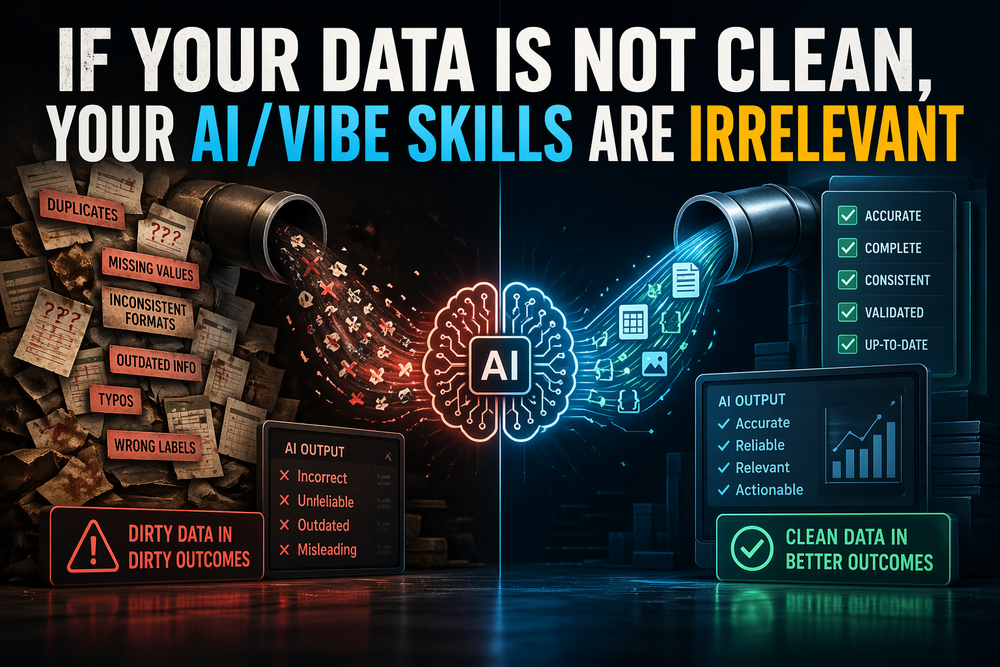

The cost side makes this even more obvious. As of April 2026, OpenAI’s public API pricing lists GPT-5.4 at $2.50 input and $15 output per million tokens, GPT-5.4 mini at $0.75 input and $4.50 output, plus separate charges for tools like web search and container sessions. Anthropic’s API pricing puts Claude Sonnet 4.6 at $3 input and $15 output per million tokens and Claude Opus 4.6 at $5 input and $25 output, with prompt-caching writes costing 1.25x or 2x the base input rate depending on duration, and server-side tools able to add usage-based charges. None of that is unreasonable on its own. The problem is that agentic coding magnifies it. Long context, repeated retries, tool schemas, reviewer passes, and “just one more run” all compound into a real line item.
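A rough way to see the compounding is to put the list prices quoted above into a few lines of arithmetic. This is a sketch under stated assumptions: the dictionary keys are my own labels rather than official API model IDs, the session shape (200 runs at 60,000 input and 4,000 output tokens each) is a made-up example, and the estimate ignores prompt caching and tool charges.

```python
# Price per million tokens as (input, output), taken from the figures above.
# The keys are illustrative labels, not official API model identifiers.
PRICES_PER_MTOK = {
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.4-mini": (0.75, 4.50),
    "claude-sonnet-4.6": (3.00, 15.00),
    "claude-opus-4.6": (5.00, 25.00),
}


def run_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one model call, ignoring caching and tool charges."""
    input_price, output_price = PRICES_PER_MTOK[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def session_cost(model: str, runs: int, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of `runs` identical calls in one working session."""
    return runs * run_cost(model, input_tokens, output_tokens)


# Hypothetical agentic session: 200 runs, 60k tokens in and 4k tokens out per run.
for model in PRICES_PER_MTOK:
    print(f"{model}: ${session_cost(model, 200, 60_000, 4_000):.2f}")
```

With those assumed numbers, a single run on the Sonnet-priced tier costs about $0.24 and the session about $48. The per-run figure looks harmless; the multiplier is what turns it into a line item.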

This is not an anti-cloud argument. It is an anti-unbounded-iteration-cost argument.

That is why the local model route has become so compelling to me. LM Studio lets you run a local server on localhost and exposes both OpenAI-compatible and Anthropic-compatible endpoints, which means existing tooling can often be redirected by changing the base URL instead of rebuilding the whole stack. Qwen3-Coder-Next is explicitly positioned for coding agents and local development; Qwen describes it as an 80B MoE model with 3B active parameters, native 256K context, and agentic coding results comparable to Claude Sonnet, while LM Studio lists 42 GB of minimum system memory for the smallest local package. In plain English: this is no longer a toy setup. It is a serious local coding stack.
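As an illustration of the "change the base URL" point, here is a minimal standard-library sketch that builds an OpenAI-style chat completion request aimed at a local server. The assumptions: the server is listening on `http://localhost:1234/v1` (LM Studio's usual default, adjust to whatever your server reports), `qwen3-coder-next` is a placeholder model identifier rather than the exact name LM Studio will show, and the API key is a dummy value. The `build_chat_request` and `send` helpers are my own names.

```python
import json
import urllib.request

# Assumption: local server address; check the address LM Studio displays.
LOCAL_BASE_URL = "http://localhost:1234/v1"
# Assumption: placeholder model identifier; use the one your server lists.
MODEL_ID = "qwen3-coder-next"


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "local-placeholder") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request(LOCAL_BASE_URL, MODEL_ID, "Summarize the PRD non-goals.")
print(request.full_url)
```

Calling `send(request)` requires the local server to be running with a model loaded. Pointing the same code at a hosted provider would only mean swapping `base_url`, `model`, and a real key, which is the whole appeal.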

In my own use, LM Studio with Qwen3-Coder-Next is roughly 10% slower than the frontier-cloud experience, but the outcome is close enough that I stop caring about the last bit of speed. The important difference is psychological and economic: I can let the model think, retry, refactor, and loop without hearing the faint sound of a token meter laughing in the background.

To be precise, this is not “free.” It just changes the cost model from variable token spend to fixed hardware spend. That matters. I would rather pay for infrastructure I control than keep turning exploratory development into a monthly usage surprise.
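The variable-versus-fixed trade can be written as a simple break-even calculation. The sketch below is only that: the hardware price, monthly cloud spend, and running cost in the example are hypothetical placeholders, not my actual numbers, and it ignores resale value, depreciation, and your own time.

```python
from typing import Optional


def break_even_months(hardware_cost: float,
                      monthly_cloud_spend: float,
                      monthly_local_running_cost: float = 0.0) -> Optional[float]:
    """Months until a one-time hardware purchase pays for itself.

    Returns None when local running costs meet or exceed the cloud spend,
    because in that case the purchase never breaks even.
    """
    monthly_saving = monthly_cloud_spend - monthly_local_running_cost
    if monthly_saving <= 0:
        return None
    return hardware_cost / monthly_saving


# Hypothetical inputs: $4,000 machine, $400/month token spend, $25/month power.
months = break_even_months(4000.0, 400.0, 25.0)
print(f"Break-even after about {months:.1f} months")
```

If your monthly token spend is small, the answer may be "never", and that is a perfectly good reason to stay on the meter.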

One of my next pet projects is testing whether a vLLM stack can squeeze even more performance out of this setup. vLLM is built around high-throughput serving, PagedAttention, continuous batching, and a broad quantization story; its current docs list formats like AWQ, GPTQ, INT4, INT8, FP8, GGUF, and quantized KV cache, and it also supports custom out-of-tree quantization plugins. TurboQuant, meanwhile, is recent research from Google on extreme compression for high-dimensional vectors and KV-cache-style workloads. So the vLLM + TurboQuant path is interesting to me precisely because it looks like something worth measuring, not a solved checkbox feature. The bigger idea is simple: architect an organizational LLM service for token-meter-free coding, then optimize the serving layer until the speed gap is small enough to stop mattering. Hardware still counts, obviously. GPUs do not accept payment in vibes.
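Since the claim here is "worth measuring", a small harness helps keep the comparison honest. This is a backend-agnostic sketch, not vLLM or LM Studio code: `generate` is a hypothetical adapter you would write per backend that sends a prompt and returns the number of completion tokens produced, and `speed_gap` is my own helper for expressing a "10% slower" style figure.

```python
import time
from statistics import median
from typing import Callable


def tokens_per_second(generate: Callable[[str], int], prompt: str,
                      repeats: int = 5,
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Median completion tokens per second over `repeats` calls.

    `generate` is a hypothetical adapter: it sends `prompt` to one backend
    and returns how many completion tokens came back.
    """
    rates = []
    for _ in range(repeats):
        start = clock()
        produced = generate(prompt)
        elapsed = clock() - start
        if elapsed > 0:
            rates.append(produced / elapsed)
    if not rates:
        raise ValueError("no timed runs completed")
    return median(rates)


def speed_gap(baseline_rate: float, candidate_rate: float) -> float:
    """Fraction by which the candidate is slower than the baseline."""
    return 1.0 - candidate_rate / baseline_rate


# Illustrative only: a candidate at 90 tokens/s against a 100 tokens/s baseline.
print(f"gap: {speed_gap(100.0, 90.0):.0%}")
```

Using the median rather than the mean keeps one cold-start run from skewing the comparison. The same prompt set should go to every backend, or the numbers are not comparable.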

And that brings me to my current main code project: One Desktop Client To Rule Them All.

After a few weeks of instruction set after instruction set, I started noticing a pattern. I was not getting closer to simplicity. I was getting buried in accumulated complexity. Each pass fixed something, but each pass also widened the system in a slightly different direction.

That is the trap.

AI makes it very easy to keep moving while quietly making the architecture worse.

So I have a choice. I can continue fixing the current pile, or I can do the more rational thing and start clean with the structure I should have enforced from day one: PRD first, phased instruction sets second, orchestration and agents third, code review throughout, and a local LLM at the center so the iteration loop can run without a token tax.

My bet — and I think it is the correct one — is that this gets the project to Version 1 testing in 3 to 4 days.

Not because restarting is magical.

Because once the system is structured, the model stops improvising architecture and starts executing it.

That is the real lesson for me.

Vibe coding is not the problem.

Unstructured vibe coding is.

When the repo is small and the goal is fuzzy, vibes are fuel.

When the repo gets serious and the product starts mattering, vibes need a chassis.

Sometimes starting over is not admitting defeat.

Sometimes it is just the fastest route back to reality.