TL;DR

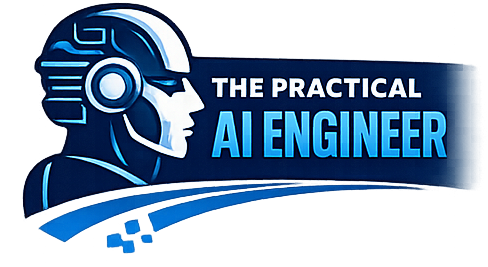

Vibe coding isn’t chaos—it’s sequencing.

If you generate code before defining scope, architecture, and release criteria, you’ll build a demo, not a product.

The winning formula:

Spec → Criteria → Architecture → CI/CD → One Vertical Slice → Hardening → Release

Everything else is rework.

Vibe coding has quickly become one of the most compelling ways to build software.

The promise is simple: describe what you want, let AI generate the implementation, and move at a speed that traditional development can’t match. In many ways, that promise is real.

But speed without structure has a predictable failure mode.

Most teams—and especially individuals working in this style—don’t fail because the AI is bad. They fail because they start coding too early, without establishing the order of operations required to turn generated code into a working, releasable system. The result isn’t a product. It’s a pile of partially connected functionality that looks impressive at first and collapses under its own weight when you try to stabilize it.

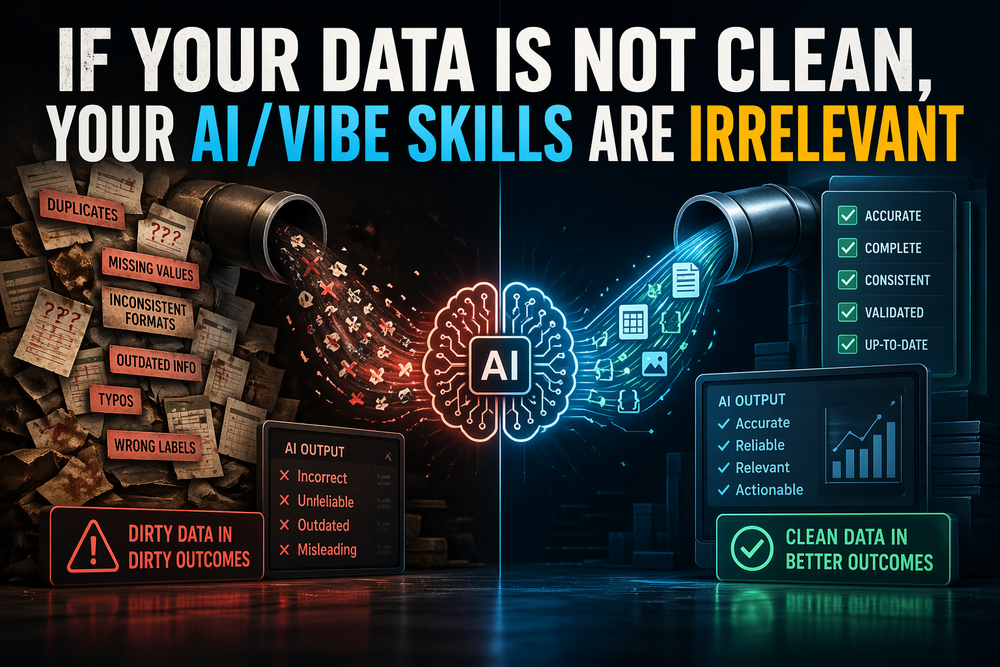

The core misunderstanding is this: vibe coding removes friction from implementation, not from decision-making. If anything, it amplifies the cost of poor decisions because you can now generate large amounts of incorrect or misaligned code extremely quickly.

The difference between shipping and stalling comes down to one thing—what you choose to do before you start asking the model to write code.

A releasable build always begins with clarity. Before a single function is generated, you need to define what the product is, who it is for, and what problem it solves. This sounds obvious, but in a vibe coding workflow it’s often skipped because it feels like overhead. Instead, people jump straight into prompts like “build me a dashboard” or “create a full-stack app that does X,” and the model obliges.

What the model produces in that moment is not wrong—it’s just unconstrained. Without a defined user, a bounded scope, and a clear definition of what “done” means, the system begins to drift immediately. Features expand. Assumptions multiply. Patterns diverge. And very quickly, you are no longer building toward a product—you are exploring a possibility space.

This is where acceptance criteria become critical. They are not documentation for documentation’s sake; they are the contract that defines success. In a traditional development environment, this role is often played by product managers, QA, or engineering leads. In vibe coding, that responsibility shifts directly to you. If you cannot clearly state what must work for the product to be considered complete, you have no way to evaluate what the AI is producing. Everything becomes “almost working,” which is another way of saying nothing is actually done.
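One way to make that contract concrete is to write each criterion as a named check that either passes or fails. The sketch below is illustrative only: `Criterion`, `evaluate`, `is_done`, and the sample `normalize_email` function are hypothetical names invented for this example, not part of any framework.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Criterion:
    """One thing that must work, paired with a check that proves it."""
    description: str
    check: Callable[[], bool]


def evaluate(criteria: List[Criterion]) -> Dict[str, bool]:
    """Run every check; a check that raises counts as a failure."""
    results: Dict[str, bool] = {}
    for criterion in criteria:
        try:
            results[criterion.description] = bool(criterion.check())
        except Exception:
            results[criterion.description] = False
    return results


def is_done(criteria: List[Criterion]) -> bool:
    """'Done' means every criterion passes, not most of them."""
    return all(evaluate(criteria).values())


# Hypothetical stand-in for a piece of generated code under evaluation.
def normalize_email(raw: str) -> str:
    return raw.strip().lower()


CRITERIA = [
    Criterion(
        "emails are stored lowercase",
        lambda: normalize_email("A@B.com") == "a@b.com",
    ),
    Criterion(
        "surrounding whitespace is removed",
        lambda: normalize_email("  a@b.com ") == "a@b.com",
    ),
]
```

Calling `is_done(CRITERIA)` after each round of generated changes gives a yes-or-no answer in place of “almost working,” and `evaluate(CRITERIA)` shows which criterion regressed.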

Once scope and success criteria are defined, the next critical decision is architectural simplicity. This is where many otherwise strong builders introduce unnecessary risk. AI systems are exceptionally good at filling in gaps—but if you give them a complex, multi-layered architecture too early, they will begin to invent glue code, abstractions, and integration logic that may technically function but is difficult to reason about and even harder to maintain.

For a first release, simplicity is not a preference—it is a survival strategy. One application, one database, one deployment path. Anything more than that should be justified by necessity, not possibility.

What separates successful vibe coding projects from failed ones is what happens next. Most people begin building features at this point. The correct move is the opposite: build the system that will support the features first.

This means setting up your repository structure, your testing framework, your environment configuration, and your deployment pipeline before meaningful feature work begins. It feels counterintuitive because none of this produces visible progress from a user perspective. But this is precisely the foundation that allows everything else to scale cleanly. Without it, you are effectively building on sand, and every new feature increases the likelihood that something will break in a way you cannot easily diagnose.
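As a small illustration of the environment-configuration piece, the sketch below loads settings once at startup and fails loudly if anything required is missing. The variable names (`APP_DATABASE_URL`, `APP_ENV`, `APP_DEBUG`) and the set of allowed environments are assumptions made for the example; substitute whatever your project actually uses.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

# Assumed names for this sketch; they are not a standard.
REQUIRED_KEYS = ("APP_DATABASE_URL", "APP_ENV")
ALLOWED_ENVIRONMENTS = {"local", "staging", "production"}


@dataclass(frozen=True)
class Settings:
    database_url: str
    environment: str
    debug: bool


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Read configuration from the environment and validate it up front."""
    source = os.environ if env is None else env

    # Report every missing key at once instead of failing one at a time.
    missing = [key for key in REQUIRED_KEYS if not source.get(key)]
    if missing:
        raise RuntimeError("missing required settings: " + ", ".join(missing))

    environment = source["APP_ENV"]
    if environment not in ALLOWED_ENVIRONMENTS:
        raise RuntimeError(f"unknown APP_ENV: {environment!r}")

    return Settings(
        database_url=source["APP_DATABASE_URL"],
        environment=environment,
        debug=source.get("APP_DEBUG", "0") == "1",
    )
```

Failing at startup is a deliberate choice here: a misconfigured deployment stops immediately with a readable message, instead of surfacing later as an unrelated-looking error deep inside generated code.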

Only after this foundation is in place should you begin implementing functionality—and even then, not broadly. The most effective approach is to build a single, thin vertical slice of the product. One complete workflow, end to end. Input, validation, business logic, persistence, and output. Not every screen. Not every API. Just one real, usable path through the system.

This accomplishes something that no amount of partial implementation can: it proves that the system works as a whole. It also forces alignment across layers, which is where many AI-generated systems fail. When you build horizontally (UI first, then APIs, then database), each layer is built against assumptions about the others, and integration problems stay hidden until the layers finally meet. When you build vertically, those problems surface immediately and can be resolved while the system is still small.
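To make the idea of a vertical slice tangible, here is a minimal sketch of one workflow that touches every layer: input, validation, business logic, persistence, and output. The task-tracking domain, the `tasks` table, and the function names are all hypothetical, and SQLite from the Python standard library stands in for whatever single database you chose.

```python
import sqlite3
from typing import Dict, List


def init_db(conn: sqlite3.Connection) -> None:
    """Create the one table this slice needs."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS tasks ("
        "id INTEGER PRIMARY KEY, title TEXT NOT NULL, status TEXT NOT NULL)"
    )


def create_task(conn: sqlite3.Connection, raw_title: str) -> Dict[str, object]:
    # Input and validation: reject anything the rest of the system cannot trust.
    title = raw_title.strip()
    if not title:
        raise ValueError("title must not be empty")
    if len(title) > 200:
        raise ValueError("title must be 200 characters or fewer")

    # Business logic: every new task starts in the "open" state.
    status = "open"

    # Persistence: using the connection as a context manager commits on
    # success and rolls back if the insert raises.
    with conn:
        cursor = conn.execute(
            "INSERT INTO tasks (title, status) VALUES (?, ?)", (title, status)
        )

    # Output: return exactly what a caller (UI or API) would render.
    return {"id": cursor.lastrowid, "title": title, "status": status}


def list_tasks(conn: sqlite3.Connection) -> List[Dict[str, object]]:
    """Read the stored tasks back, proving the round trip works."""
    rows = conn.execute("SELECT id, title, status FROM tasks ORDER BY id").fetchall()
    return [{"id": r[0], "title": r[1], "status": r[2]} for r in rows]
```

With an in-memory connection (`sqlite3.connect(":memory:")`), calling `init_db`, then `create_task`, then `list_tasks` exercises the whole path in a few lines. The slice is deliberately narrow: one table and one workflow, but nothing is mocked between the layers.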

At this stage, testing should be intentional, not exhaustive. The goal is not to achieve high coverage metrics; it is to validate that the core workflow behaves correctly and continues to behave correctly as changes are introduced. A small number of well-chosen tests will do more for release readiness than a large number of superficial ones.
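A rough picture of what “a small number of well-chosen tests” might look like: one test for the happy path, one for the most damaging failure, and one for an invariant that generated changes could easily break. The `place_order` workflow is a hypothetical stand-in for your own core path, and the tests are written as plain functions so that a runner such as pytest can collect them.

```python
from typing import Dict


def place_order(stock: Dict[str, int], item: str, quantity: int) -> Dict[str, int]:
    """Hypothetical core workflow: reserve stock and return the new levels."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    available = stock.get(item, 0)
    if quantity > available:
        raise ValueError("insufficient stock")
    updated = dict(stock)  # copy so the caller's data is left untouched
    updated[item] = available - quantity
    return updated


def test_happy_path_reduces_stock():
    assert place_order({"widget": 5}, "widget", 2) == {"widget": 3}


def test_rejects_overselling():
    try:
        place_order({"widget": 1}, "widget", 2)
    except ValueError:
        return
    raise AssertionError("expected ValueError when ordering more than is in stock")


def test_does_not_mutate_caller_state():
    stock = {"widget": 5}
    place_order(stock, "widget", 5)
    assert stock == {"widget": 5}
```

Three tests will not move a coverage metric much, but each one guards a behavior the release depends on, which is the point.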

Equally important—and frequently neglected—is observability. If your system fails and you cannot see why, you do not have a releasable product. Logging, error tracking, and basic health checks are not “nice to have” features; they are operational requirements. In a vibe coding environment, where the internal logic of the system may not be fully authored by you, visibility becomes even more critical.
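As a minimal sketch of what basic visibility can mean in practice, the example below configures logging once and exposes a health check that reports whether the database is reachable. The logger name `app`, the shape of the returned dictionary, and the use of SQLite are assumptions for illustration; a real service would typically expose the result over an HTTP endpoint and send errors to a tracking service.

```python
import logging
import sqlite3
import time
from typing import Dict

logger = logging.getLogger("app")  # assumed logger name for this sketch


def configure_logging() -> None:
    """Set one consistent log format for the whole process."""
    logging.basicConfig(
        level=logging.INFO,
        format="%(asctime)s %(levelname)s %(name)s %(message)s",
    )


def health_check(conn: sqlite3.Connection) -> Dict[str, object]:
    """Report whether the database answers a trivial query, and how fast."""
    started = time.monotonic()
    try:
        conn.execute("SELECT 1").fetchone()
        database_ok = True
    except sqlite3.Error:
        # logger.exception records the full traceback, so the failure
        # is diagnosable from the logs alone.
        logger.exception("health check failed: database unreachable")
        database_ok = False

    return {
        "status": "ok" if database_ok else "degraded",
        "database": database_ok,
        "latency_ms": round((time.monotonic() - started) * 1000, 2),
    }
```

The check returns a result instead of raising, so a monitor can poll it safely, while the traceback still lands in the logs for whoever investigates.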

Security follows the same pattern. AI-generated code often handles authentication, authorization, and input validation in ways that appear correct but contain subtle flaws. Treating security as something to “clean up later” is one of the fastest ways to delay or derail a release. It must be part of the build process, not an afterthought.
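One example of a flaw that “appears correct” is a lookup that fetches a record by ID without checking who is asking. The sketch below, built on a hypothetical `documents` table and hypothetical exception names, shows the two habits worth verifying in generated code: parameterized queries instead of string-built SQL, and an explicit ownership check before returning data.

```python
import sqlite3
from typing import Dict


class NotFound(Exception):
    """The requested document does not exist."""


class Forbidden(Exception):
    """The requester is not allowed to read this document."""


def get_document(
    conn: sqlite3.Connection, requester_id: int, document_id: int
) -> Dict[str, object]:
    # Parameterized query: the driver binds document_id as a value,
    # so it can never be interpreted as SQL.
    row = conn.execute(
        "SELECT id, owner_id, body FROM documents WHERE id = ?",
        (document_id,),
    ).fetchone()
    if row is None:
        raise NotFound(f"document {document_id} does not exist")

    # Authorization: knowing a valid ID is not the same as having access.
    if row[1] != requester_id:
        raise Forbidden("requester does not own this document")

    return {"id": row[0], "body": row[2]}
```

A version without the ownership check would pass a casual demo, because the author usually tests with their own records. That is why this kind of gap tends to survive until someone deliberately tests with a second user.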

By the time you reach staging, the goal is no longer to prove that the system can run locally—it is to prove that it behaves correctly in a real environment. This includes deployment, data persistence, user flows, and failure handling. If the system only works on your machine, it does not work.

Finally, release should be gradual, not all-at-once. The first version of your product should reach a limited audience, with clear rollback mechanisms and monitoring in place. This is not caution for its own sake; it is what allows you to learn from real usage without exposing yourself to unnecessary risk.
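A limited audience with a rollback path can be as simple as a percentage gate. The sketch below is one common approach, not the only one: it hashes a user ID into a stable bucket from 0 to 99 and admits users whose bucket falls below the current percentage. The function name `in_rollout` and the feature name used in the usage note are hypothetical.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Decide whether a user sees a feature at the given rollout percentage."""
    # Setting percent to 0 acts as the rollback switch; 100 is full release.
    if percent <= 0:
        return False
    if percent >= 100:
        return True

    # Hash feature and user together so each feature gets its own,
    # independent assignment of users to buckets.
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100

    # The bucket is stable for a given user, so raising the percentage
    # only adds users; nobody who already has the feature loses it.
    return bucket < percent
```

For example, `in_rollout(user_id, "new-checkout", 10)` admits roughly one user in ten, and the same users stay admitted when the number is later raised to 50. Keeping the percentage in configuration rather than in code means rolling back does not require a redeploy.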

The most common failure pattern in vibe coding is not technical incompetence. It is sequencing error. Building interfaces before systems, features before infrastructure, and complexity before validation leads to a codebase that grows quickly but stabilizes poorly. What feels like speed in the early stages becomes friction later, often at the exact moment you are trying to ship.

The insight that changes everything is simple: vibe coding does not eliminate the need for discipline—it shifts where that discipline must be applied. The structure that used to live in implementation now has to exist before implementation begins.

When you get the order right, AI becomes a force multiplier. It executes quickly, consistently, and with surprising depth. When you get it wrong, it accelerates confusion, amplifies inconsistency, and produces systems that are difficult to complete.

In the end, the difference between a demo and a product is not how much code you generate. It is whether that code was generated in the right order.

And in vibe coding, order is everything.