TL;DR

Vibe coding is great at creating momentum. It is much less reliable at preserving system integrity.

It compresses the time between rough intent and visible output. That matters. It can accelerate scaffolding, comparisons, refactors, boilerplate, documentation framing, and a surprising amount of early-stage implementation. But once the work expands into architecture, context management, model orchestration, packaging, observability, and security, the weaknesses become harder to ignore.

Recent builds made that lesson much clearer for me. The limiting factor was almost never whether the model could generate something plausible. The limiting factor was whether the surrounding workflow was strong enough to turn generated output into software I would actually trust to keep.

Vibe coding works brilliantly right up to the point where the software has to behave like a product.

That is the part I keep coming back to.

It is easy to be impressed when AI can scaffold a UI, wire up a service layer, sketch a schema, or turn a rough description into something that runs. I have seen that benefit repeatedly. Across recent work on desktop AI coding tools, mixed local and SaaS model workflows, agent-style development systems, context backends, packaging automation, and even ideas around mobile visibility or control for coding environments, AI has been very good at reducing the distance between idea and first implementation.

But the further I pushed those systems beyond the prototype phase, the less the real question seemed to be, “Can AI write code?”

The real question became: can an AI-assisted workflow help me build software that remains coherent once the project has state, trust boundaries, release constraints, packaging requirements, and real operating conditions?

That is where vibe coding stops being a novelty and starts becoming a serious engineering question.

Why This Became Clearer in Recent Builds

A lot of the public conversation around vibe coding still lives at the level of isolated features. Generate a page. Build a component. Connect an API. Mock a tool. That is the part AI handles well enough that people start thinking the whole development process has changed in a simple, linear way.

I do not think it has.

Once you move into developer-facing products, desktop AI applications, hybrid local/SaaS model stacks, agent coordination, context persistence, build pipelines, installer flows, or remote visibility, the standard changes. The problem is no longer whether code can be generated. The problem is whether the generated pieces agree with each other, survive real constraints, and stay understandable after multiple rounds of iteration.

That sounds like a subtle distinction. In practice, it is not.

A generated screen can look polished while hiding weak assumptions about state. A generated backend can appear complete while quietly ignoring failure handling. A generated architecture can sound clean while locking you into abstractions that will become expensive later. A model can be locally impressive and systemically shallow at the same time.

That is where most of the real learning has happened for me.

Where Vibe Coding Earned Its Place

The upside is real, and I do not think it should be minimized.

The first gain is startup velocity. AI reduces blank-page friction better than any other tool I have used in development. It helps turn rough intent into something tangible quickly. That is useful in coding, product framing, interface shaping, and workflow design.

The second gain is breadth-first exploration. It is easier to inspect multiple candidate directions before committing. That matters more than people think. A lot of good engineering decisions start as comparative work, not immediate certainty. AI is useful as a generator of options, not just implementations.

The third gain is repetitive execution. Once the task is constrained well enough, AI can save meaningful time on boilerplate, transformation logic, repetitive refactors, formatting, and documentation scaffolding. Those are not glamorous wins, but they are real wins.

The fourth gain is momentum preservation. Small chores do not kill flow the way they used to. If I need a first pass at a settings panel, an ingestion routine, a wrapper around a model call, or a refactor into clearer modules, AI can often get me moving again without the usual restart cost.

I also noticed this in mixed local and SaaS workflows. Local models made experimentation cheaper and more disposable. Frontier APIs still tended to win when I needed stronger reasoning or better long-context handling, but once I had both options available, I became less interested in finding a single perfect tool and more interested in composing a workable development loop.

That has probably been the most practically useful shift.

Where It Started Lying to Me

The failure pattern was rarely that the model could not produce code.

The failure pattern was that the code stopped being trustworthy once the surface area widened.

1. Coherence breaks before output quality breaks

This is one of the most important things I have learned.

A model can produce individually plausible files for a long time before the system starts drifting. Naming conventions diverge. State handling becomes inconsistent. One layer assumes a local workflow while another quietly assumes a cloud-native one. The UI suggests confidence that the lifecycle underneath it does not justify.

Nothing looks obviously wrong in isolation.

The system is what starts degrading.

2. Context is not a support detail. It is central.

The more I worked with agent-style flows and structured instruction files, the more obvious it became that context design is one of the real engines underneath successful AI-assisted development.

Without strong boundaries, the model carries assumptions across tasks, roles, or sessions. That contamination gets misread as randomness, but it usually is not random. It is state leakage.

In other words, a lot of bad vibe coding is not really a generation problem. It is a context management problem.

This is also where AGENTS.md-style structure became more interesting to me. The value was not that “agents” sounded advanced. The value was that explicit roles, boundaries, and handoffs made the work more legible. When those handoffs were vague, multiple agents just created multiple confident ways to be wrong.
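To make "explicit roles, boundaries, and handoffs" a little more concrete, here is a minimal Python sketch of a handoff record that refuses to be passed along when it is vague. The `Handoff` fields and the `validate_handoff` rules are hypothetical illustrations; they are not the AGENTS.md format or any agent framework's API.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Hypothetical record passed from one agent role to the next."""
    from_role: str
    to_role: str
    scope: str                 # what the receiving role is allowed to touch
    definition_of_done: str    # how the receiver knows it is finished
    constraints: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)


def validate_handoff(h: Handoff) -> list[str]:
    """Return a list of problems; an empty list means the handoff is explicit enough."""
    problems = []
    if not h.scope.strip():
        problems.append("scope is empty")
    if not h.definition_of_done.strip():
        problems.append("definition of done is empty")
    if not h.constraints:
        problems.append("no constraints listed")
    if not h.out_of_scope:
        problems.append("no explicit out-of-scope boundary")
    if h.from_role == h.to_role:
        problems.append("handoff does not change role")
    return problems


vague = Handoff("planner", "implementer", scope="make it work", definition_of_done="")
explicit = Handoff(
    "planner",
    "implementer",
    scope="src/settings/ only",
    definition_of_done="settings persist across restart; tests in tests/settings pass",
    constraints=["no new dependencies", "keep existing config keys"],
    out_of_scope=["auth", "packaging"],
)

print(validate_handoff(vague))
# ['definition of done is empty', 'no constraints listed', 'no explicit out-of-scope boundary']
print(validate_handoff(explicit))  # []
```

The specific rules matter less than the behavior: a vague handoff fails loudly before a second agent builds confidently on top of it.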

3. Integration work exposes shallow reasoning fast

It is easy to generate a feature. It is harder to generate correct behavior once that feature depends on authentication, file access, document handling, retries, background processes, persistent state, model selection, or environment constraints.

That is where AI output often becomes dangerous in a very ordinary way: it is almost right.

Not clearly broken. Not clearly correct. Just convincing enough to accept too quickly and expensive enough to unwind later.

I have seen this pattern most often when the work includes local file flows, context backends, model orchestration, or anything that starts behaving like a real system instead of a neat demo.

4. Packaging and release reality are brutal

This became clearer the moment the output had to become installable, versioned, supportable, or observable.

A system that works in a dev session is not the same as a system that can be packaged cleanly, deployed predictably, monitored sensibly, or recovered when it fails. Desktop behavior, dependency handling, installer logic, environment assumptions, and release workflows expose the gap between “the code exists” and “the product works.”

That gap is where a lot of AI confidence goes to die.

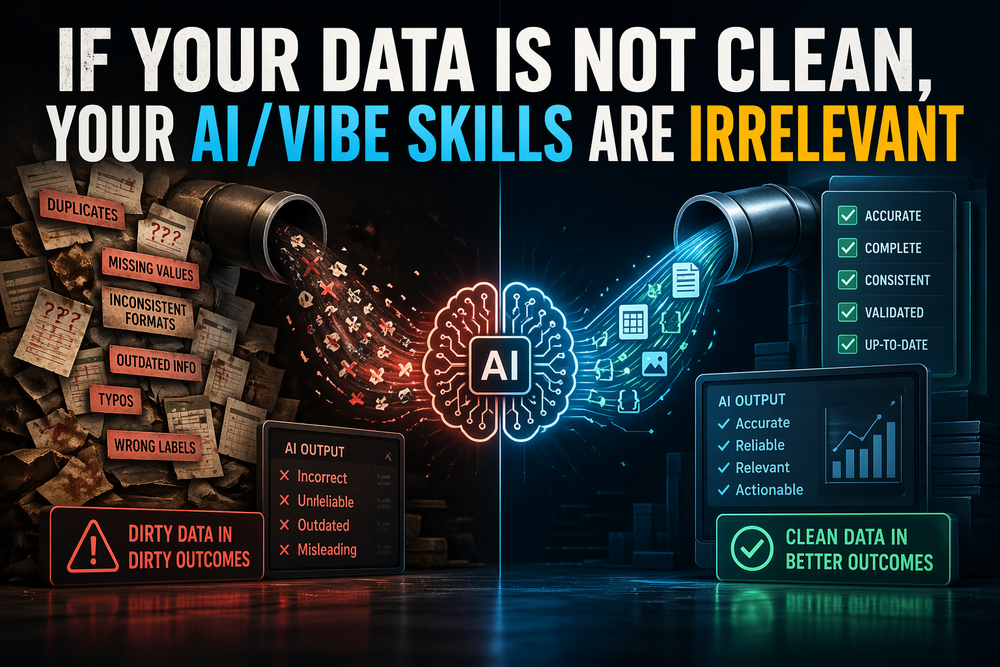

5. Security debt arrives much earlier than people admit

This matters even more in AI-heavy systems.

Anything that touches local files, scanned documents, code execution, remote visibility, or external integrations creates trust and security questions immediately. AI-generated code is often good at producing the outer shape of security. It is much less reliable at producing a security model I would trust without inspection.

Authentication, authorization, validation, input handling, and boundary control are classic examples of code that can look fine while being subtly weak.

That is not a cleanup issue.

That is a build issue.
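Boundary control for local file access is a good example of code that can look fine while being subtly weak. The sketch below contrasts a naive prefix check with one that resolves the path before checking containment. The function names and the `/srv/workspace` root are hypothetical; `Path.resolve` and `Path.is_relative_to` (Python 3.9+) are standard library.

```python
from pathlib import Path

ALLOWED_ROOT = Path("/srv/workspace")  # hypothetical directory the app may read from


def naive_is_allowed(user_path: str) -> bool:
    # Plausible-looking, but "../" segments and sibling prefixes such as
    # "/srv/workspace-evil" both pass this string comparison.
    return user_path.startswith(str(ALLOWED_ROOT))


def is_allowed(user_path: str) -> bool:
    # Resolve ".." segments and symlinks first (an absolute user_path replaces
    # the root when joined), then check containment by path components.
    candidate = (ALLOWED_ROOT / user_path).resolve()
    return candidate.is_relative_to(ALLOWED_ROOT.resolve())


print(naive_is_allowed("/srv/workspace/../../etc/passwd"))  # True
print(is_allowed("/srv/workspace/../../etc/passwd"))        # False
print(is_allowed("notes/todo.md"))                          # True
```

This is still only one layer: it does not cover authorization, and a check like this can race with later filesystem changes, which is exactly why it deserves review rather than acceptance on sight.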

6. Observability becomes non-negotiable

The moment I started thinking about mobile visibility or control for a desktop AI coding environment, the entire conversation changed. It was no longer “Can the model generate a control surface?” It became:

What exactly am I exposing?

What state is visible?

What happens on network failure?

What permissions are implied?

What can be triggered remotely?

What logs exist when something goes wrong?

Those are not secondary questions. They are the real questions.

And once those questions show up, vibe coding by itself is not enough.
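One way to force answers to those questions is to make the remote surface an explicit allowlist rather than whatever a generated control panel happens to expose. A minimal sketch, assuming a hypothetical set of action names and a simple two-level permission model; none of this is a real protocol or product API.

```python
import logging
from dataclasses import dataclass
from enum import Enum

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("remote")


class Permission(Enum):
    VIEW = 1
    CONTROL = 2


@dataclass(frozen=True)
class RemoteAction:
    name: str
    required: Permission
    mutates_state: bool


# Hypothetical allowlist: anything not listed here cannot be triggered remotely.
ALLOWED_ACTIONS = {
    a.name: a
    for a in [
        RemoteAction("view_status", Permission.VIEW, mutates_state=False),
        RemoteAction("view_logs", Permission.VIEW, mutates_state=False),
        RemoteAction("pause_agent", Permission.CONTROL, mutates_state=True),
    ]
}


def authorize(action_name: str, granted: Permission) -> bool:
    """Allow only listed actions at or below the caller's permission; log every decision."""
    action = ALLOWED_ACTIONS.get(action_name)
    if action is None:
        log.warning("rejected unknown remote action %r", action_name)
        return False
    if granted.value < action.required.value:
        log.warning("rejected %r: needs %s, caller has %s",
                    action_name, action.required.name, granted.name)
        return False
    log.info("allowed %r (mutates_state=%s)", action_name, action.mutates_state)
    return True


print(authorize("view_status", Permission.VIEW))   # True
print(authorize("pause_agent", Permission.VIEW))   # False
print(authorize("run_shell", Permission.CONTROL))  # False
```

The `mutates_state` flag and the log lines are there so that "what can be triggered remotely" and "what logs exist" have answers written down in one place.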

What Changed in My Process

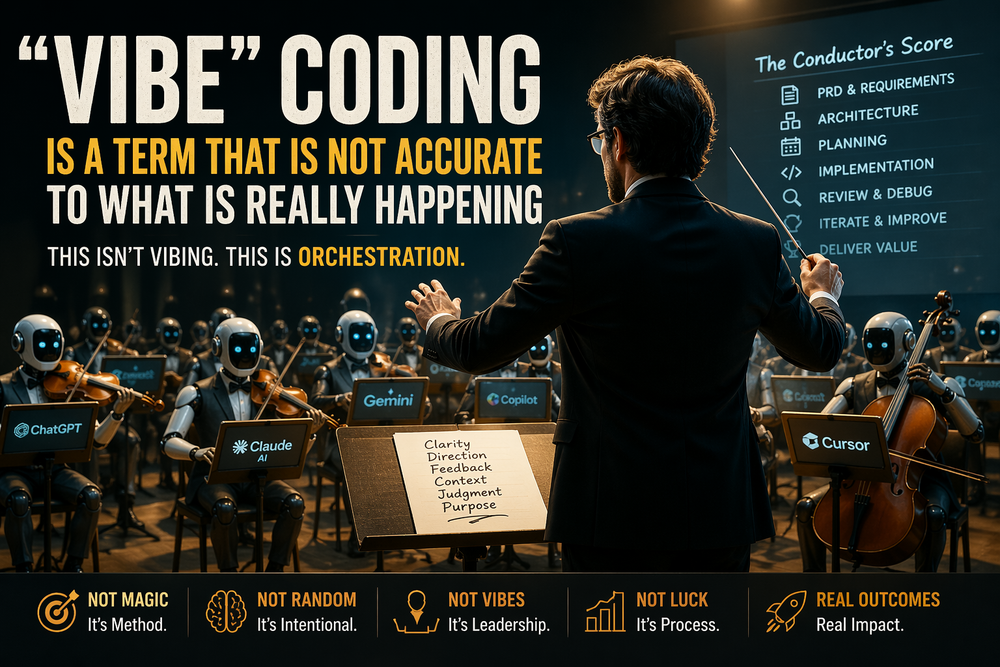

The biggest shift in my thinking is that I stopped treating vibe coding as a prompt problem and started treating it as a workflow design problem.

That changed almost everything.

I now separate exploratory prompting from implementation prompting much more aggressively. Early exploration can stay loose. Build work cannot. Once something is headed toward the actual product, I want tighter scope, clearer role definition, explicit constraints, and a much more concrete definition of done.

I also care far more about thin vertical slices than broad horizontal generation. I would rather prove one real path end to end than generate five partially connected layers that only look complete. A real workflow forces alignment across UI, logic, state, persistence, validation, and failure handling. That is where the truth shows up.

Context discipline matters more too. Scoped sessions, structured instruction sets, explicit boundaries, and resets between materially different tasks are not bureaucratic habits. They are how I keep the model from dragging assumptions into places they do not belong.
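Mechanically, "scoped sessions and resets between materially different tasks" can be as simple as keying context to a task, so switching tasks starts again from the shared instruction set instead of carrying the previous conversation forward. A minimal sketch; the `ScopedSession` class and the notion of a `task_id` are hypothetical, and no model API is called.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScopedSession:
    """Hypothetical holder for the context sent to a model, one task at a time."""
    base_instructions: str            # shared, stable instruction set
    task_id: Optional[str] = None
    history: list[str] = field(default_factory=list)

    def start_task(self, task_id: str, brief: str) -> None:
        # A materially different task starts from the base instructions only,
        # so assumptions from the previous task cannot leak into this one.
        if task_id != self.task_id:
            self.task_id = task_id
            self.history = []
        self.history.append(f"TASK BRIEF: {brief}")

    def add(self, message: str) -> None:
        if self.task_id is None:
            raise RuntimeError("no active task; call start_task first")
        self.history.append(message)

    def context(self) -> list[str]:
        # What would be sent to the model: shared instructions plus this task's history.
        return [self.base_instructions, *self.history]


session = ScopedSession("Project rules: no new dependencies without review.")
session.start_task("settings-panel", "Build the settings panel UI.")
session.add("Preferences are stored in a local JSON file.")
session.start_task("ingestion", "Write the document ingestion routine.")
print(session.context())
# ['Project rules: no new dependencies without review.', 'TASK BRIEF: Write the document ingestion routine.']
```

The storage assumption from the settings task is gone once the ingestion task starts, which is the kind of state leakage described earlier.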

Observability has moved earlier in the process. Logging, health signals, job visibility, error paths, and basic operational checks are not late-stage polish in AI-assisted systems. They are part of how I establish control over something that was partially machine-generated in the first place.
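A minimal sketch of what pulling job visibility and error paths forward can look like: a decorator that records each background job's state and last error, plus a health summary derived from that record. The names `tracked_job`, `JOB_STATUS`, and `health` are hypothetical; only the standard `logging`, `functools`, and `time` modules are used.

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("jobs")

# Hypothetical in-memory registry; a real system would persist or export this.
JOB_STATUS: dict[str, dict] = {}


def tracked_job(name: str):
    """Record start, success, or failure of a job so its state is always visible."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            JOB_STATUS[name] = {"state": "running", "started": time.time(), "error": None}
            log.info("job %s started", name)
            try:
                result = fn(*args, **kwargs)
            except Exception as exc:
                JOB_STATUS[name].update(state="failed", error=repr(exc))
                log.exception("job %s failed", name)  # logs the traceback too
                raise
            JOB_STATUS[name].update(state="ok")
            log.info("job %s finished", name)
            return result
        return wrapper
    return decorator


def health() -> dict:
    failed = [n for n, s in JOB_STATUS.items() if s["state"] == "failed"]
    return {"healthy": not failed, "failed_jobs": failed}


@tracked_job("index_documents")
def index_documents(paths):
    return len(paths)


@tracked_job("sync_context")
def sync_context():
    raise ConnectionError("backend unreachable")


index_documents(["a.md", "b.md"])
try:
    sync_context()
except ConnectionError:
    pass
print(health())  # {'healthy': False, 'failed_jobs': ['sync_context']}
```

The failure is re-raised rather than swallowed, so the caller still handles it while the status record keeps it visible.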

And I pull packaging and operational questions forward now. How will this ship? What does it assume about the environment? What happens when it fails outside my machine? What needs to be visible before I call it usable? Those questions used to feel downstream. In practice, they need to show up much earlier.
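Asking "what does it assume about the environment?" early can be as simple as writing those assumptions down as a preflight check that runs before the app does anything else. A minimal sketch; the minimum Python version, the required executable, and the `MODEL_API_BASE` variable are hypothetical placeholders for whatever a given build actually depends on.

```python
import os
import shutil
import sys

# Hypothetical assumptions this build makes about the machine it runs on.
MIN_PYTHON = (3, 10)
REQUIRED_EXECUTABLES = ("git",)
REQUIRED_ENV_VARS = ("MODEL_API_BASE",)


def preflight(
    min_python=MIN_PYTHON,
    executables=REQUIRED_EXECUTABLES,
    env_vars=REQUIRED_ENV_VARS,
    environ=os.environ,
) -> list[str]:
    """Return human-readable problems; an empty list means the assumptions hold."""
    problems = []
    if sys.version_info < min_python:
        wanted = ".".join(map(str, min_python))
        problems.append(f"Python {wanted}+ required, found {sys.version.split()[0]}")
    for exe in executables:
        if shutil.which(exe) is None:
            problems.append(f"executable not found on PATH: {exe}")
    for var in env_vars:
        if not environ.get(var):
            problems.append(f"environment variable not set: {var}")
    return problems


# At startup: call preflight() and refuse to launch, showing the problems, if it is non-empty.
```

Keeping the assumptions as data at the top of the file also makes them reviewable when packaging or installer logic changes.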

What Vibe Coding Actually Looks Like to Me Now

I no longer think vibe coding means building from pure instinct.

I think it means conversational acceleration sitting on top of hidden structure.

When it works, it usually works because rigor already exists upstream, whether people call it that or not. There is a build brief. There are scoped constraints. There is an instruction model. There is a review habit. There is some notion of what release readiness means. There is a line between exploratory output and accepted implementation.

The interaction feels fluid because the structure is already doing the hard work.

When it fails, it is usually because the conversation is being asked to replace the structure rather than express it.

That is the distinction I trust now.

The more real the product becomes, the less useful “just build it” prompting becomes. At that point, the winning move is rarely more generation. It is better framing, better boundaries, and better review.

What I Would Tell Anyone Building This Way

Use vibe coding for acceleration, not abdication.

Let AI help with exploration, scaffolding, comparison, routine implementation, and low-leverage friction. That is where it is strongest.

Do not let it define the system by default.

Be explicit about architecture before the codebase spreads.

Be explicit about context before agents multiply.

Be explicit about release criteria before a demo starts pretending to be a product.

Be explicit about observability before failures become mysterious.

Be explicit about security before local access, document flows, or control surfaces show up.

And judge the workflow, not the individual response.

A single good answer from a model means very little if the surrounding process cannot reliably turn that answer into software that is testable, supportable, and worth keeping.

Takeaways

- Vibe coding is strongest at reducing the cost of exploration, not eliminating engineering judgment.

- The bigger the system surface area, the more the real problems shift toward context, architecture, integration, and release behavior.

- Agent workflows and structured instruction files help only when their handoffs and boundaries are explicit.

- Desktop apps, local/SaaS model orchestration, packaging flows, and observability needs expose weaknesses that simple demos hide.

- Security cannot be treated as a later cleanup phase in AI-generated systems.

- Good AI-assisted development feels fast because rigor has already been pushed upstream.

- The future of usable vibe coding is not less engineering. It is more front-loaded engineering expressed through better language, better constraints, and better workflow design.

Final Thought

Recent builds have not made me less interested in vibe coding.

They have made me more precise about what it is for.

It is excellent for getting moving.

It is excellent for widening the search space.

It is excellent for removing friction from early implementation.

It is not a replacement for system design.

It is not a replacement for security thinking.

It is not a replacement for release discipline.

And it is definitely not a replacement for deciding what belongs in the product in the first place.

That is where I have landed, at least for now.

The more real the software becomes, the less vibe coding can survive as a vibe.

At that point, it either matures into an engineering process or it collapses into a demo.