TL;DR

GePT-AI Studio started as an AI desktop-client idea and matured into a much bigger thesis: enterprises do not need another chat wrapper; they need a governed desktop control plane for AI work.

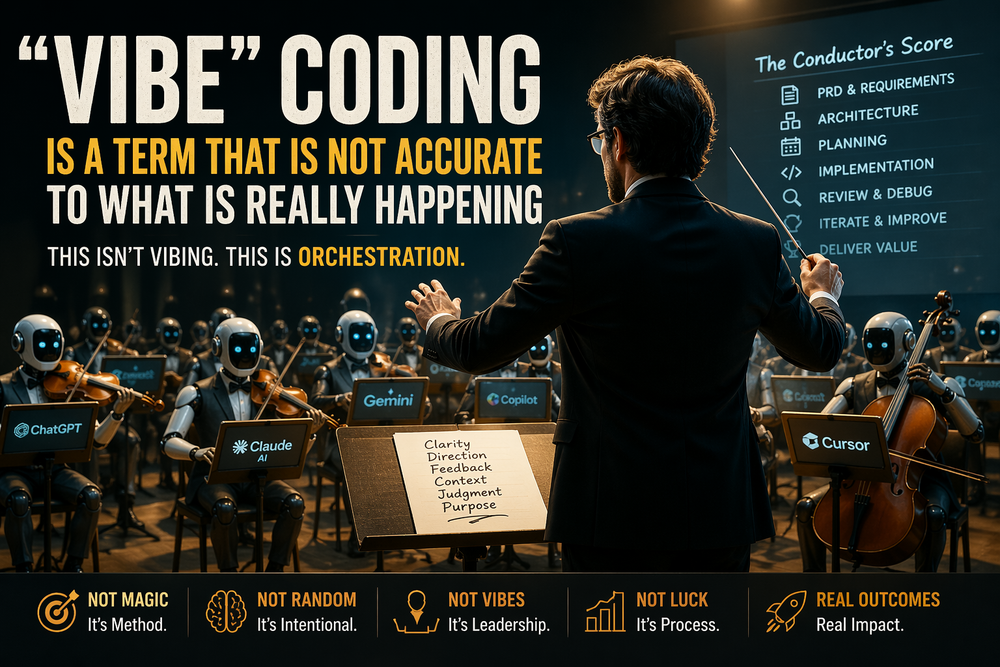

The biggest wins came from treating AI development as a PRD and workflow problem, using structured orchestration instead of loose vibe coding, shifting UX into visual prototyping first, and designing for organizational memory rather than individual sessions.

The biggest failures came from architectural drift, trying to fix UX in code, and underestimating how central governance, approvals, security, and context boundaries would be.

The core lesson is simple: enterprise AI clients need governed extensibility, policy-aware model routing, approval-aware workflows, secure artifact handling, auditable logs, organizational memory, and agent creation that non-technical teams can actually use.

The more time I spend building GePT-AI Studio, the less interested I become in the usual AI desktop-client checklist.

Model access matters. Nice UX matters. Plugins matter. Local-model support matters.

But those are not the real problem.

The real problem is that most AI desktop clients are still built like personal productivity tools, while the environments they are heading into are organizational systems. That gap sounds small until you try to close it. Then it becomes obvious that an enterprise AI desktop client is not really a chat app problem. It is a control-plane problem.

That realization did not arrive all at once. It came through a series of wins, failures, and uncomfortable lessons.

At the start of this journey, I was learning the same thing many builders are learning right now: AI is genuinely useful, but it does not remove the need for judgment. In some ways it raises the bar. The faster a model can generate code, the faster it can also generate drift, confusion, and technical debt when the problem is not framed properly. That was one of the first real shifts in my thinking. Vibe coding was not magic. It was clarity, structure, and review wearing the mask of speed.

That changed how I approached the project.

I stopped treating prompts as the main lever and started treating the instruction set and the PRD as the actual operating system for the build. The prompt is the conversation. The instruction set is the behavior. The PRD is the source of truth. Once I accepted that, I got my first meaningful win: the work became more coherent. Features stopped feeling like isolated AI-generated tricks and started behaving more like parts of a product.

But that was only the first lesson.

The second lesson was harsher: unstructured vibe coding compounds chaos faster than traditional development compounds debt. When the workflow gets loose, the codebase stops reflecting architecture and starts reflecting the history of your last ten prompts. That is where I learned that sometimes starting over is not a failure. Sometimes it is the first rational decision you have made in a week.

That was one of the clearest failures in my own progression. I had stretches where I was no longer building the product. I was managing the side effects of previous instructions. The fix was not “ask the model better questions.” The fix was to impose sequencing and discipline:

PRD first.

Then phase-based instruction sets.

Then orchestration.

Then review.

Then hardening.

That sounds less romantic than “just vibe it into existence,” but it works far better when the software has to survive beyond a demo.

The next failure was more personal: UX.

Historically, UX has been my weakest point in this kind of work. I can think in systems. I can define workflows. I can reason about orchestration, policies, approvals, and integrations. But translating interface intent into code through pure text was burning time and compounding frustration. I kept trying to solve a visual problem in a coding medium.

That was backwards.

One of the biggest improvements in GePT-AI Studio came when I stopped trying to code the UI first and started prototyping it visually first. Once I used AI as a mockup and interaction-design tool before using it as a code-generation tool, the quality of iteration changed immediately. The UI improved faster, the feedback loop collapsed, and I stopped trying to out-prompt a fundamentally visual problem.

That lesson mattered because enterprise software does not get judged only on capability. It gets judged on whether people will actually adopt it. A system that is powerful but awkward is not enterprise-ready. It is just expensive potential.

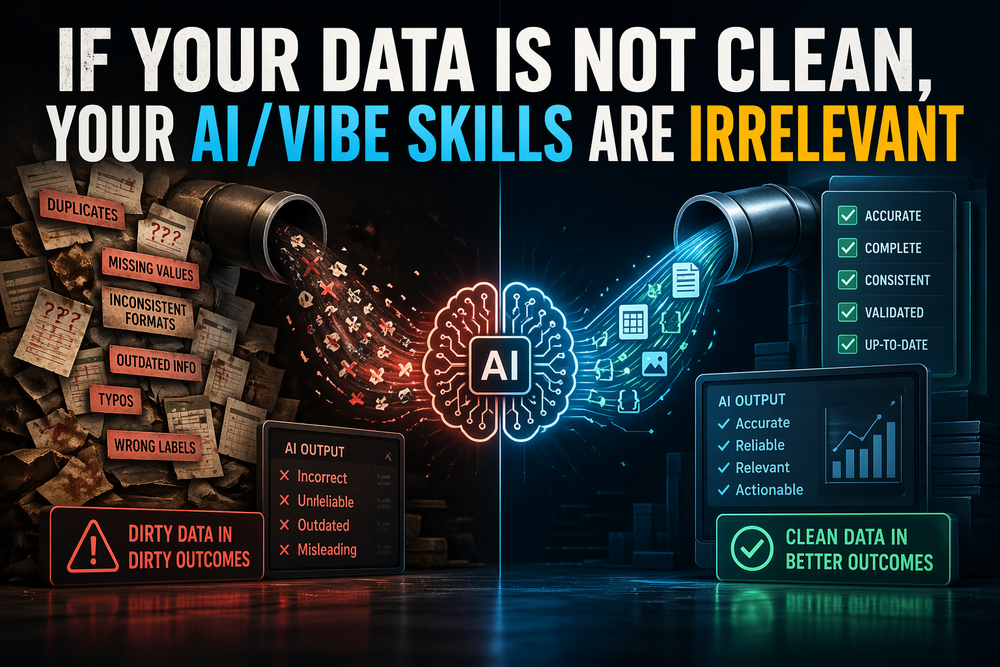

Around the same time, another shift became obvious: model access is not the same as model strategy.

A lot of tools can connect to multiple providers now. That is no longer a differentiator by itself. An enterprise client needs a policy-aware model strategy. It needs to know when work should stay local, when hosted inference is acceptable, which agents can touch which data, when approvals are mandatory, and how cost, latency, privacy, and capability are traded off. A dropdown full of model names is not governance. It is just a menu.
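To make the difference between a menu and a policy concrete, here is a minimal sketch of what a routing decision could look like. It is not GePT-AI Studio's actual implementation; the `Sensitivity` levels, the `AGENT_CLEARANCE` table, the agent names, and the specific rules are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Task:
    agent: str
    sensitivity: Sensitivity
    needs_frontier_model: bool = False


@dataclass(frozen=True)
class RouteDecision:
    target: str              # "local" or "hosted"
    requires_approval: bool
    reason: str


# Hypothetical policy table: the highest sensitivity each agent may touch.
AGENT_CLEARANCE = {
    "summarizer": Sensitivity.INTERNAL,
    "contracts-reviewer": Sensitivity.RESTRICTED,
}


def route(task: Task) -> RouteDecision:
    """Decide where a task runs and whether a human must approve it first."""
    clearance = AGENT_CLEARANCE.get(task.agent)
    # Unknown agents, or agents reaching above their clearance, are refused.
    if clearance is None or task.sensitivity.value > clearance.value:
        raise PermissionError(
            f"{task.agent} is not cleared for {task.sensitivity.name} data"
        )
    # Restricted data never leaves the machine, whatever capability is requested.
    if task.sensitivity is Sensitivity.RESTRICTED:
        return RouteDecision("local", False, "restricted data stays local")
    # Hosted inference is allowed, but internal data needs an approval first.
    if task.needs_frontier_model:
        needs_approval = task.sensitivity is Sensitivity.INTERNAL
        return RouteDecision("hosted", needs_approval, "frontier capability requested")
    return RouteDecision("local", False, "default to the cheaper local model")
```

The point of the sketch is that the model name never appears in the caller's hands. The caller describes the work, and the policy decides where it runs, whether an approval is needed, and why.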

This is one reason GePT-AI Studio moved toward a deeper enterprise posture. The goal stopped being “one more AI desktop client” and became “a desktop layer that can govern how AI work actually happens.” That means agents, plugins, orchestration, approvals, context management, and system controls all have to live under the same operating logic. In an enterprise setting, those cannot be separate ideas. They are the product.

That also led to one of the biggest wins in the project: realizing that organizational memory matters more than personal convenience.

Most current tools still assume one advanced user, one session, one workflow, one context window, one operator. That is fine for accelerating an individual. It is weak for institutional adoption. Enterprises need memory that belongs to the organization, not just the person at the keyboard. They need reusable behavior, shared context, approved logic patterns, and workflows that survive staff changes. If the system only works because one power user knows the right incantation, then it does not work. It is fragile.

This is where the product thesis behind GePT-AI Studio sharpened.

An enterprise AI desktop client actually needs governed extensibility. Not just plugins, but versioned, reviewable, policy-aware extensions.

It needs workflow logic as a first-class object. Not hidden prompt rituals, but reusable templates, escalation paths, approval checkpoints, and auditable decision boundaries.
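As a rough illustration of workflow logic as an object rather than a prompt ritual, the sketch below models a workflow as named steps, some of which sit behind an approval checkpoint, with every decision written to an audit log. The `Step` and `Workflow` classes, the `approver` callback, and the example workflow are hypothetical names invented for this sketch, not the product's real API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    action: Callable[[dict], dict]
    requires_approval: bool = False


@dataclass
class Workflow:
    name: str
    steps: list[Step]
    audit_log: list[str] = field(default_factory=list)

    def run(self, state: dict, approver: Callable[[str, dict], bool]) -> dict:
        """Run steps in order, pausing at approval checkpoints."""
        for step in self.steps:
            if step.requires_approval:
                approved = approver(step.name, state)
                verdict = "granted" if approved else "denied"
                self.audit_log.append(f"{step.name}: approval {verdict}")
                if not approved:
                    # Halt instead of continuing; a real system would escalate here.
                    self.audit_log.append(f"{step.name}: escalated, workflow halted")
                    return state
            state = step.action(state)
            self.audit_log.append(f"{step.name}: completed")
        return state


# Example: drafting is automatic, sending requires a human decision.
workflow = Workflow("vendor-email", [
    Step("draft", lambda s: {**s, "draft": f"Dear {s['vendor']}"}),
    Step("send", lambda s: {**s, "sent": True}, requires_approval=True),
])
result = workflow.run({"vendor": "Acme"}, approver=lambda step, state: False)
```

With the approver refusing, the draft is produced but never sent, and the audit log records both the denial and the halt. That record is what makes the decision boundary reviewable after the fact.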

It needs context management that respects boundaries. Not just long chats, but scoped memory, project memory, team memory, and clear separation between what should persist and what should not.

It needs hybrid model orchestration. Local where privacy, cost, or repeatability matter. Hosted where frontier capability is worth the trade.

It needs security built into the artifact lifecycle. Skills, agents, MCP packages, templates, plugin bundles, and updates should be treated like untrusted inputs until they are explicitly cleared. In enterprise environments, “download and trust” is not a feature. It is a breach path.
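One simple way to picture "untrusted until explicitly cleared" is a registry that pins each artifact to the exact bytes a reviewer approved. The sketch below assumes SHA-256 digests and an in-memory registry; the `ArtifactRegistry` class, its method names, and the artifact name are illustrative only, and a real deployment would likely add signatures, persistence, and revocation.

```python
import hashlib
import hmac
from dataclasses import dataclass, field


@dataclass
class ArtifactRegistry:
    # Maps artifact name -> (cleared SHA-256 digest, reviewer who cleared it).
    cleared: dict[str, tuple[str, str]] = field(default_factory=dict)

    @staticmethod
    def digest(payload: bytes) -> str:
        return hashlib.sha256(payload).hexdigest()

    def clear(self, name: str, payload: bytes, reviewer: str) -> None:
        """Record that a named reviewer approved these exact bytes."""
        if not reviewer:
            raise ValueError("clearance must name a reviewer")
        self.cleared[name] = (self.digest(payload), reviewer)

    def is_trusted(self, name: str, payload: bytes) -> bool:
        """An artifact is trusted only if its bytes match a cleared digest."""
        record = self.cleared.get(name)
        if record is None:
            return False  # never cleared: treat as untrusted input
        expected_digest, _reviewer = record
        return hmac.compare_digest(expected_digest, self.digest(payload))


registry = ArtifactRegistry()
bundle = b"plugin bundle v1"

before = registry.is_trusted("pdf-tools", bundle)           # not cleared yet
registry.clear("pdf-tools", bundle, reviewer="secops")
after = registry.is_trusted("pdf-tools", bundle)            # cleared bytes
tampered = registry.is_trusted("pdf-tools", bundle + b"!")  # modified bytes
```

Because trust is tied to the content rather than the name, an update to a previously cleared plugin goes back to untrusted until someone clears the new bytes.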

And it needs an agent-builder experience that does not assume the user wants to become an AI engineer.

That last point is one of the most important lessons in the whole project.

The future of enterprise AI is not giving every business stakeholder a prompt box and hoping they become technical. The future is giving them guided, governed ways to define useful digital teammates. That means templates, plain-language configuration, preview and testing, permissions, escalation rules, autosave, draft recovery, analytics, and lifecycle controls. If creating an agent requires too much technical fluency, the business remains dependent on engineering for every iteration. That kills scale.

So when I look back at the progression of GePT-AI Studio, I do not see a straight line. I see a product getting more honest.

The wins were real: better structure, better iteration, better UX workflow, clearer product thinking, stronger enterprise framing.

The failures were just as real: architectural drift, over-reliance on loosely structured AI generation, treating UX like a coding problem, and underestimating how central governance and security would become.

But the lessons are the valuable part.

I learned that enterprise AI clients do not fail because they lack model access. They fail because they mistake features for operating principles.

They fail when extensibility is local instead of governed.

They fail when memory is personal instead of organizational.

They fail when agents are powerful but unmanageable.

They fail when security is layered on later.

They fail when UX remains an afterthought.

They fail when the people who understand the work cannot safely shape the automation.

GePT-AI Studio exists because of those lessons.

What I am building now is not a prettier wrapper around LLM APIs. It is an attempt to turn AI desktop work into something more durable: governed, repeatable, secure, collaborative, and actually usable in an enterprise environment.

That is what an enterprise AI desktop client actually needs.

And it took a few wins, a few failures, and a lot of rethinking to understand that clearly.