TL;DR

Most AI products still treat memory like a better transcript. That is not enough for enterprise work.

In my Vibe coding projects, and especially while shaping GePT-AI Studio into something closer to an enterprise AI control plane than a simple desktop chat client, I kept running into the same problem: chat history preserves conversation, but not operational intent.

Enterprise AI needs project-level memory with clear scope and persistence. That means:

- memory tied to a specific project, not a generic user profile

- durable context built from PRDs, decisions, release notes, and approved artifacts

- continuity across long-running builds, multiple sessions, multiple agents, and even team handoffs

- strict boundaries around what is remembered, where it lives, and who can use it

The difference is simple: chat history helps an AI remember what was said; project memory helps it remember what is being built.

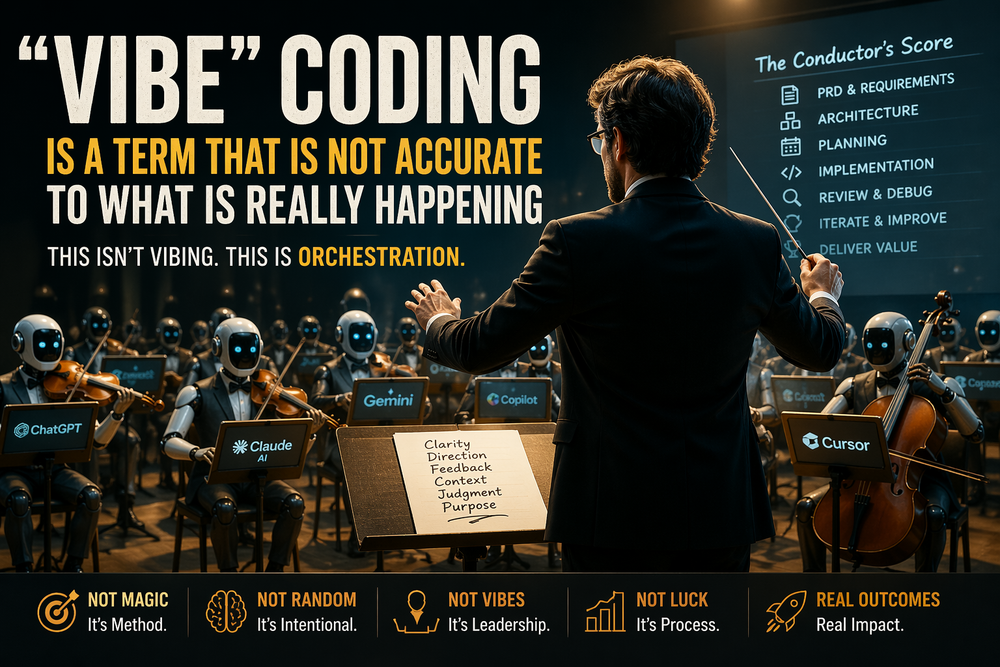

The hard lesson from Vibe coding

One of the most useful things about Vibe coding is also one of its biggest traps: it feels like progress.

You can go from idea to interface to prototype very quickly. You can prompt your way into a feature, refine it in place, and get something that looks real. That speed is valuable. I have used it heavily across my projects.

But once a build starts lasting longer than a single session, the weaknesses show up fast.

The AI remembers the recent thread. It remembers the last few requests. It may even sound consistent. But it does not reliably remember:

- the original product goal

- the current phase of the build

- what tradeoffs were already made

- which assumptions were rejected

- what shipped last

- what changed in the architecture

- what is approved vs experimental

- which context belongs to this project and not another one

That gap is where drift begins.

In practice, I found that when AI worked mainly from chat history, it optimized for the latest prompt, not the real project. It responded fluently, but it lost the build thread. Features started to bend toward whatever had just been discussed. UX decisions got revisited. Architecture changed without intent. Scope crept. The system sounded smart while becoming less reliable.

That is why I stopped thinking about memory as a convenience feature and started thinking about it as execution infrastructure.

Chat history is not the same thing as memory

A chat transcript is a record of interaction. That has value. But enterprise delivery requires something else: a structured, scoped memory model that survives beyond the conversation.

Here is the practical difference:

| Need | Chat History | Project-Level Memory |
|---|---|---|
| Recall what was discussed | Good | Good |
| Preserve product intent | Weak | Strong |
| Survive long build cycles | Fragile | Durable |
| Support team handoffs | Manual | Built in |
| Track decisions and changes | Buried in messages | Explicit artifacts |
| Govern access and scope | Opaque | Scoped and inspectable |
| Coordinate multiple agents/tools | Unreliable | Structured |

A transcript is linear. A project is not.

Projects have artifacts, phases, approvals, dependencies, release states, and trust boundaries. If the AI cannot anchor itself to those things, it becomes a session assistant, not a delivery system.

Enterprise work happens inside projects, not conversations

This became especially clear while thinking through what an enterprise AI workstation or desktop client actually needs.

In consumer AI, “memory” often means “remember my preferences.” In enterprise AI, memory has to answer much harder questions:

- Which project am I in?

- What is the current PRD?

- What is the accepted architecture?

- Which tools or plugins are approved for this workspace?

- What changed in the last release?

- What is still in draft?

- Which instructions are temporary, and which are now policy?

- What should persist across sessions, and what should expire?

That is a fundamentally different design problem.

In GePT-AI Studio, the requirement quickly stopped being “add better chat history.” The real requirement became:

Give the AI a bounded project context with durable artifacts, clear permissions, and continuity across the full build lifecycle.

That means the memory model has to be scoped by object, not just by user.
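To make "scoped by object" concrete, here is a minimal sketch of a per-project memory boundary. The names `ProjectContext` and `MemoryStore` are hypothetical and are not taken from GePT-AI Studio; the point is only that lookups start from a project ID and that access is checked against that project's own member list.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectContext:
    """Hypothetical container: one memory boundary per project."""
    project_id: str
    members: set[str] = field(default_factory=set)            # who may read or write
    artifacts: dict[str, str] = field(default_factory=dict)   # artifact name -> content

    def write(self, user: str, name: str, content: str) -> None:
        self._check(user)
        self.artifacts[name] = content

    def read(self, user: str, name: str) -> str:
        self._check(user)
        return self.artifacts[name]

    def _check(self, user: str) -> None:
        # Permissions belong to the project, not to a global user profile.
        if user not in self.members:
            raise PermissionError(f"{user} has no access to {self.project_id}")


class MemoryStore:
    """Resolves memory by project first, so two projects never share state."""

    def __init__(self) -> None:
        self._projects: dict[str, ProjectContext] = {}

    def create(self, project_id: str, members: set[str]) -> ProjectContext:
        ctx = ProjectContext(project_id, set(members))
        self._projects[project_id] = ctx
        return ctx

    def get(self, project_id: str) -> ProjectContext:
        return self._projects[project_id]
```

In this sketch a user who belongs to two projects still gets two separate contexts, which is the property a user-keyed memory cannot give you.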

What project-level memory should actually include

For enterprise AI, the most useful memory is usually not raw message recall. It is a set of persistent project anchors.

1. The PRD as the primary intent anchor

A good PRD is not just documentation. It is the most important memory object in the project.

It tells the AI:

- what problem is being solved

- who the user is

- what success looks like

- what is in scope

- what is explicitly out of scope

- what constraints matter

Without that anchor, every new session becomes a renegotiation of intent.

In my own workflow, the more reliable pattern became:

PRD → phased instruction sets → orchestration → review → hardening

That sequence reduced drift because each step left behind durable context for the next one. The PRD was not just a planning artifact. It was the foundation the AI kept returning to.

2. Decision logs

Enterprise builds produce a constant stream of tradeoffs:

- local vs hosted model

- plugin-based vs built-in tool integration

- visual-first UX workflow vs code-first iteration

- autonomous behavior vs human approval checkpoints

If those decisions live only in the chat thread, they are easy to lose and easy for the AI to accidentally reverse.

A lightweight decision log gives the system something far more stable than “I think we talked about this.”
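One possible shape for such a log, as a sketch: an append-only list where a later entry on the same topic supersedes the earlier one without erasing it. The `Decision` and `DecisionLog` names and fields are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    topic: str       # e.g. "model hosting"
    choice: str      # e.g. "local"
    rationale: str   # why the tradeoff went this way


class DecisionLog:
    """Append-only: entries are never edited, only superseded by later ones."""

    def __init__(self) -> None:
        self._entries: list[Decision] = []

    def record(self, decision: Decision) -> None:
        self._entries.append(decision)

    def current(self) -> dict[str, Decision]:
        """Latest decision per topic; later entries win."""
        latest: dict[str, Decision] = {}
        for entry in self._entries:
            latest[entry.topic] = entry
        return latest

    def history(self, topic: str) -> list[Decision]:
        """Every decision ever recorded for a topic, oldest first."""
        return [e for e in self._entries if e.topic == topic]
```

Keeping the history alongside the current view lets the AI see not only what was decided, but which options were already tried and rejected.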

3. Release notes

Release notes are underappreciated as memory infrastructure.

They do more than summarize what shipped. They tell the AI:

- what changed

- what assumptions are no longer true

- what has been stabilized

- what is still experimental

- what needs regression attention

That matters because long-running builds are full of stale assumptions. If the AI does not know what changed between v0.8 and v0.9, it will keep proposing work based on an obsolete model of the system.
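As a rough illustration, release notes can be stored as structured records so the system can ask which assumptions were retired since the version it last worked against. The `ReleaseNote` fields and the `stale_since` helper are hypothetical, and versions are modeled as simple `(major, minor)` tuples for the sake of the sketch.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseNote:
    version: tuple[int, int]   # (0, 9) stands for v0.9
    changed: list[str] = field(default_factory=list)
    retired_assumptions: list[str] = field(default_factory=list)
    experimental: list[str] = field(default_factory=list)


def stale_since(notes: list[ReleaseNote], last_seen: tuple[int, int]) -> list[str]:
    """Assumptions retired in any release newer than the one last seen."""
    stale: list[str] = []
    for note in sorted(notes, key=lambda n: n.version):
        if note.version > last_seen:
            stale.extend(note.retired_assumptions)
    return stale
```

A session that last saw v0.8 could call `stale_since(notes, (0, 8))` before planning new work and drop anything in the result from its working model.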

4. Approved artifacts and trust state

Enterprise AI is not just working with text. It is working with artifacts:

- plugin bundles

- skills

- agent templates

- MCP integrations

- UX specs

- architecture docs

- prompt packs

- deployment notes

These should not all be treated equally.

Some are drafts. Some are approved. Some are untrusted. Some are deprecated. Project memory should carry that state forward. Otherwise the AI may confidently build on top of the wrong artifact.
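A small sketch of carrying that state forward: each artifact records its trust state, and only approved artifacts are offered as a foundation for new work. The four states come from the paragraph above; the `Artifact` type and the `buildable` filter are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class TrustState(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    DEPRECATED = "deprecated"
    UNTRUSTED = "untrusted"


@dataclass(frozen=True)
class Artifact:
    name: str
    kind: str          # e.g. "plugin bundle", "UX spec"
    state: TrustState


def buildable(artifacts: list[Artifact]) -> list[Artifact]:
    """Return only the artifacts that are safe to build on."""
    return [a for a in artifacts if a.state is TrustState.APPROVED]
```

Drafts and deprecated artifacts can still be retrieved for reference in a design like this; the filter only decides what the AI is allowed to treat as settled.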

5. Working summaries for continuity

Long projects need concise, durable summaries that can be reloaded at the start of a session.

Not “summarize the whole chat.”

Not “remember everything forever.”

Instead:

- current objective

- current phase

- open decisions

- known risks

- latest shipped changes

- next recommended actions

That is the kind of continuity that keeps a build moving.
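The six fields above map naturally onto a small record that can be rendered into a session preamble. This is a sketch only; `WorkingSummary` and `to_preamble` are hypothetical names, and the output format is one arbitrary choice among many.

```python
from dataclasses import dataclass, field


@dataclass
class WorkingSummary:
    objective: str
    phase: str
    open_decisions: list[str] = field(default_factory=list)
    known_risks: list[str] = field(default_factory=list)
    latest_changes: list[str] = field(default_factory=list)
    next_actions: list[str] = field(default_factory=list)

    def to_preamble(self) -> str:
        """Render a short text block to load at the start of a session."""
        sections = [
            ("Open decisions", self.open_decisions),
            ("Known risks", self.known_risks),
            ("Latest shipped changes", self.latest_changes),
            ("Next recommended actions", self.next_actions),
        ]
        lines = [f"Objective: {self.objective}", f"Phase: {self.phase}"]
        for title, items in sections:
            if items:  # skip empty sections to keep the preamble short
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)
```

Because the summary is a plain structure rather than a generated recap of the chat, a person can read it, correct it, and reset it between sessions.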

Scoped memory matters as much as persistent memory

One of the biggest mistakes in AI product design is treating memory as a single bucket.

Enterprise systems need layers.

Session memory

What matters right now in the active conversation.

Project memory

The durable context for this specific product or initiative: PRD, decisions, release notes, architecture state, approved tools, and open work.

Team memory

Shared conventions, workflows, approvals, standards, and collaboration patterns.

Organization memory

Policies, compliance rules, security constraints, governance requirements, and reusable playbooks.

Those scopes should not collapse into one another.

A design discussion in one project should not silently bleed into another. A temporary experiment should not become organization-wide memory. A personal preference should not overwrite a team standard.

The point is not just persistence. The point is containment.

That is what makes memory usable in enterprise settings.
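Containment can be sketched as separate buckets keyed by scope and owner, with promotion between buckets allowed only as an explicit, approved step. The four scopes are the ones listed above; the `ScopedMemory` class, its method names, and the approver rule are assumptions for illustration.

```python
from enum import Enum
from typing import Optional


class Scope(Enum):
    SESSION = "session"
    PROJECT = "project"
    TEAM = "team"
    ORGANIZATION = "organization"


Bucket = tuple[Scope, str]  # (scope, owner id), e.g. (Scope.PROJECT, "studio")


class ScopedMemory:
    """Each (scope, owner) pair is its own bucket; nothing moves implicitly."""

    def __init__(self) -> None:
        self._buckets: dict[Bucket, dict[str, str]] = {}

    def remember(self, bucket: Bucket, key: str, value: str) -> None:
        self._buckets.setdefault(bucket, {})[key] = value

    def recall(self, bucket: Bucket, key: str) -> Optional[str]:
        return self._buckets.get(bucket, {}).get(key)

    def promote(self, key: str, src: Bucket, dst: Bucket, approved_by: str) -> None:
        """Copy one entry to another bucket, only with a named approver."""
        if not approved_by:
            raise PermissionError("promotion requires an explicit approver")
        value = self.recall(src, key)
        if value is None:
            raise KeyError(key)
        self.remember(dst, key, value)
```

With this layout, something remembered in one project is invisible to another project and to the team layer until someone deliberately promotes it.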

Why this matters even more for long-running builds

The longer a project runs, the more dangerous shallow memory becomes.

Long-running builds usually involve:

- multiple sessions over days or weeks

- changing priorities

- evolving architecture

- several agents or tools

- human review loops

- restarts after failed attempts

- handoffs between people

This is where “just use the chat history” breaks down.

A transcript does not provide durable continuity. It provides narrative continuity. That is not the same thing.

For real build continuity, the AI needs to know the project’s stable frame even when:

- the thread changes

- the model changes

- the operator changes

- the task changes

- the build pauses and resumes later

That is why project memory should outlive the session. It should also outlive the person who happened to be driving the keyboard that day.

In enterprise environments, continuity is not just about convenience. It is about organizational memory.

The product-management reason this matters

From a product perspective, project-level memory is how you embed product ownership into the AI workflow.

Without it, the AI behaves like a very fast responder.

With it, the AI starts behaving more like a system that understands the product boundary it is operating inside.

That changes the quality of work dramatically.

It means the AI can:

- generate work that stays aligned to the PRD

- preserve out-of-scope boundaries

- produce release notes from actual project context

- resume work without re-deriving intent

- support audits and reviews with traceable reasoning inputs

- reduce “prompt churn” across long delivery cycles

This is also why enterprise AI needs more than clever orchestration. It needs memory with product structure.

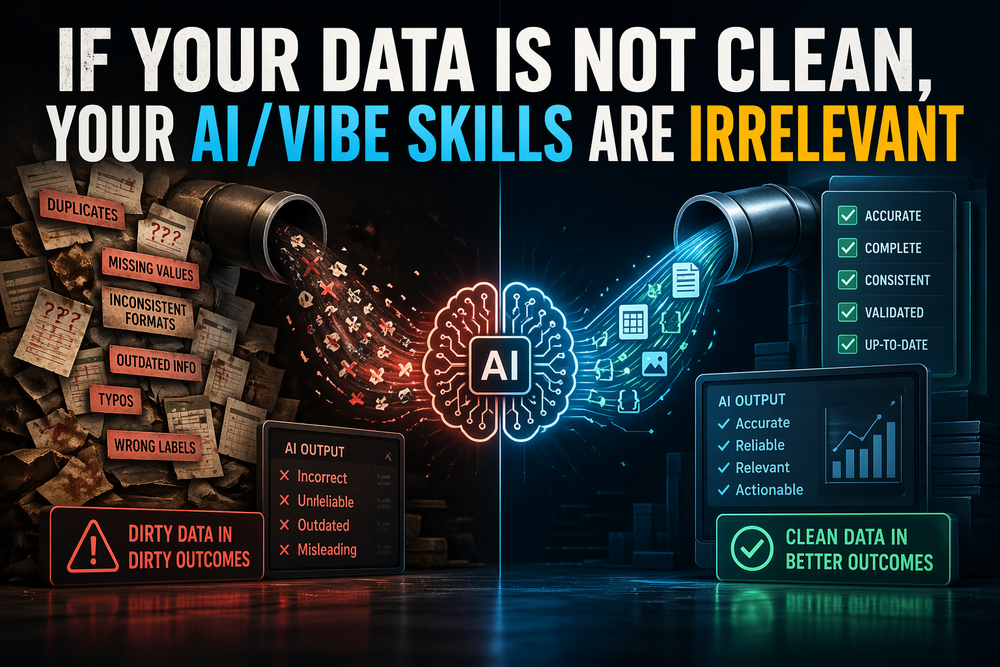

The best orchestration layer in the world still degrades if the AI is operating on thin, unstable context.

What I would build into enterprise AI by default

If I were defining the default memory model for an enterprise AI workstation today, it would include:

- Per-project context containers: each project gets its own memory boundary, artifacts, summaries, and access controls.

- PRD-linked memory: the AI should always be able to resolve the active PRD and current scope before generating major work.

- Decision and release logs: changes should be tracked as durable memory, not buried in chat.

- Explicit persistence controls: users should know what is session-only, what is project-persistent, and what is shared upward.

- Inspectable summaries: memory should be readable, editable, and resettable.

- Artifact-aware retrieval: the AI should retrieve the right project docs, plugin states, and instructions, not just semantically similar text.

- Governed trust boundaries: approved, draft, deprecated, and untrusted artifacts should not be mixed together.

That is not an enhancement layer. That is core product architecture.

Final thought

The more I work on AI products, the less I believe memory should be treated as a personality feature.

For enterprise systems, memory is not mainly about personalization. It is about continuity, scope control, and execution fidelity.

Chat history is useful. But it is only the conversational residue of the work.

The real work lives elsewhere: in the PRD, in the decision trail, in release notes, in approved artifacts, in project boundaries, and in the continuity that lets a build survive across sessions, tools, and people.

That is why enterprise AI needs project-level memory.

Not because chat history is bad.

Because chat history alone does not know what the project is trying to become.